Hi!

As long-time readers of the newsletter know, I’m a fan of variant sudoku puzzles — sudoku with strange rules like thermometers, ratio dots, cages, and other things that you’re probably already confused by. Many times, I’ve mentioned and endorsed James Sinclair’s Artisanal Sudoku newsletter, and I’m going to do the same today — and then some. Because James has a book out, and I have photographic evidence that you’ll like it.

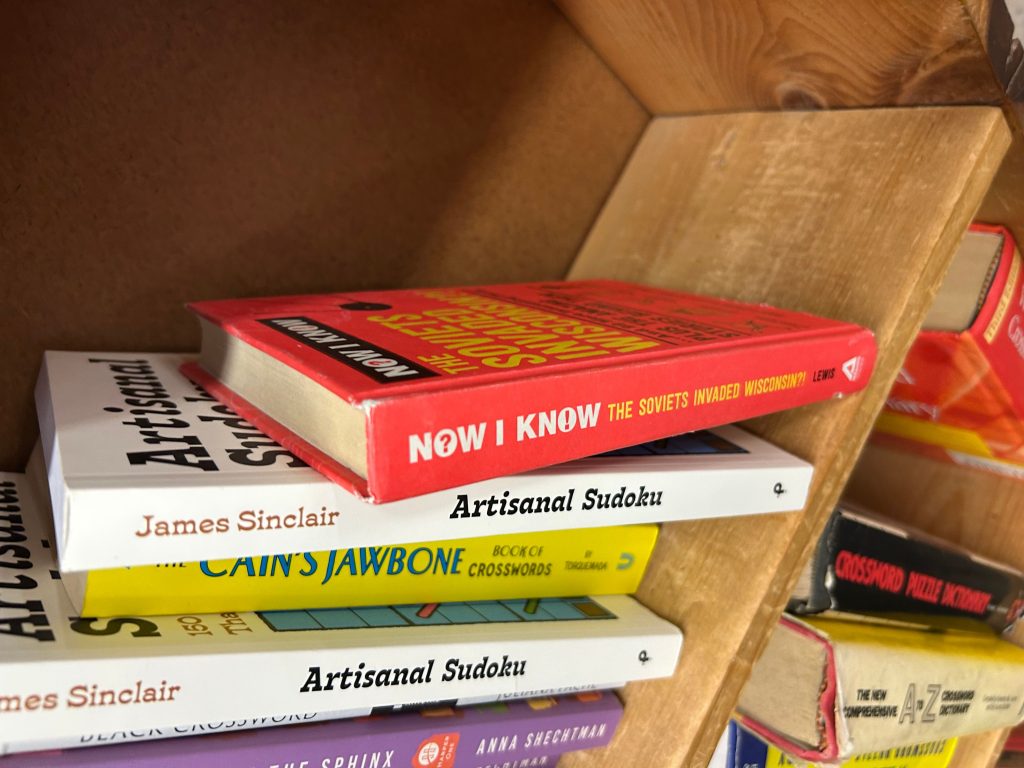

This week, I was in Manhattan and early for an event, so I stopped by Strand, a famous used bookstore. I went to see if they had any of my books on the shelves, and they did, as seen below. And on the same shelf, next to my book (I rotated the image below), is the book I’m here to share with you today: James’ book of 150 handcrafted variant sudoku puzzles.

I didn’t buy his book because I already owned it — I preordered it when he announced it last fall. Easiest impulse buy in recent memory, too, because his puzzles are fantastic. (I didn’t buy my own book either, but it’s sitting there for $10 if you want a used copy. I considered stealthily signing it but I didn’t have a pen on me, and didn’t want to get arrested.)

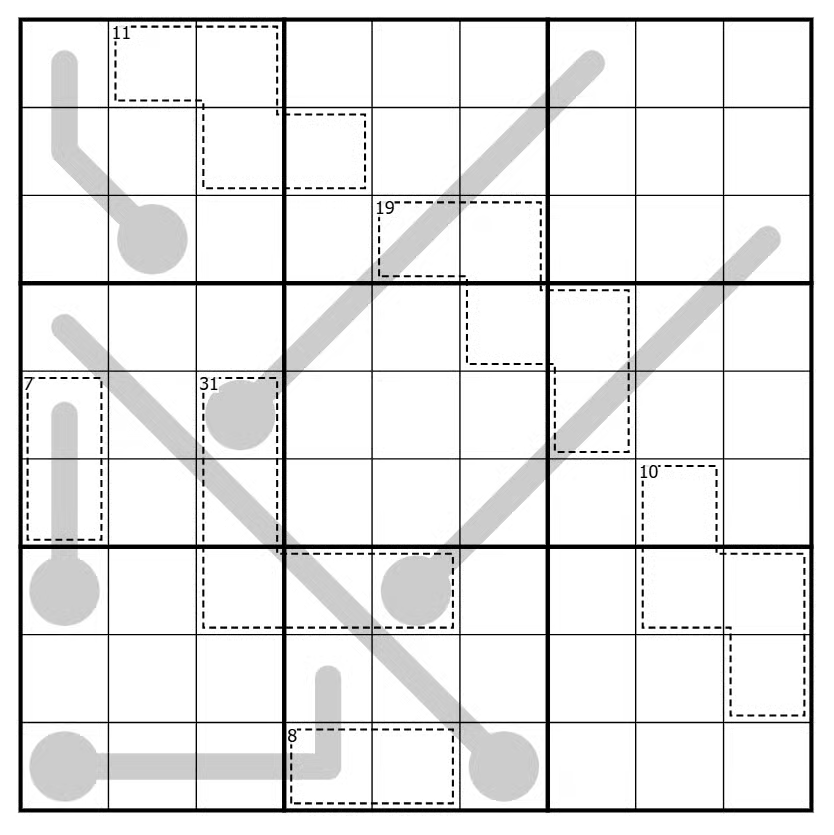

But don’t take my word for it — try one of his puzzles yourself. Here’s one from this week’s newsletter, which you can play online here:

The rules:

- Regular sudoku rules apply — place the numbers 1 through 9 in the grid nine times each, so that no number repeats in any row, column, or bold-outlined 3×3 box.

- The sum of the digits inside each cage is equal to the small number in the top left corner, and digits cannot repeat within a cage.

- Those grey lines are thermometers. Digits on a thermometer increase from the bulb end, though not necessarily by one at each step.

Enjoy, and if you like it, consider buying James’ book. And if you’re like me, copy the pages so you don’t have to write in the book itself.

The Now I Know Week In Review

Monday: A Life-Saving Football Blooper: He got a kick (heh) out of this one, and it sent him to the hospital — thankfully.

Tuesday: A Planely Obvious Punishment: Stupid human tricks, with somewhat predictable results.

Wednesday: Why You Shouldn’t Tick off a Tiger: It may not get you right away, but that may not matter.

Thursday: The Boy and the Blue Cup: A heartwarming story.

Long Reads and Other Things

Here are a few things you may want to check out over the weekend:

1) “Inside the Quest to Mine the Bottom of the Sea” (New York Times/gift link, 11 minutes, June 2026). This is very cool, albeit controversial.

2) “How Long Does It Take to Plan a Bridge?” (Construction Physics, 6 minutes, June 2026). The “New Tappan Zee Bridge” listed here isn’t too far from me, and it felt like it took forever. But it only took five years to build, plus another 13 to plan, which … OK, that kind of does feel like forever. It’s not out of the norm, though.

3) “The Secret Garden of Rock-Paper-Scissors” (The Shamblog, 6 minutes, May 2026). What happens when you add more options to the classic three-option game? He did the math.

Have a great weekend, and let’s go Knicks!

Dan

You didn’t “have a conversation” with ChatGPT.

It doesn’t “think you should…” It doesn’t think.

It didn’t “tell you that…” It doesn’t speak.

It doesn’t “feel that the best option is…” It doesn’t feel.

AI is a cheap parlor trick. You provide words, and it provides words back that are most likely to occur alongside the words you provided.

…

A useful reminder for the next time you’re tempted to personify or humanise an LLM.

LLMs are statistical tools. There are some things that the statistics of language can be good at, especially on average: stuff like summarisation, sentiment analysis, pattern identification, and checking for internal consistency.

But they’re just maths. They’re not a person.

It’s not even that they don’t care about you or don’t want to help you. They don’t even go that far: they’re incapable of “caring” or “wanting” in the first place. What they do is take all of the information they’ve ingested, plus their training and prompt, plus the conversation you’ve had with them so far, plus a random number, and produce output which is, after a fashion, a prediction of what comes next.
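To make “a prediction of what comes next, plus a random number” a little more concrete, here’s a toy sketch in Python. It is not how any real model is built: the candidate words and their probabilities are invented for illustration, and a real LLM scores tokens (word fragments) across a large vocabulary. The point is only that the “choice” at the end is a weighted random draw.

```python
import random

def sample_next_word(probabilities, rng):
    """Pick one candidate word, weighted by its (made-up) probability."""
    words = list(probabilities)
    weights = [probabilities[w] for w in words]
    # random.choices does a weighted draw; the "random number" comes from rng
    return rng.choices(words, weights=weights, k=1)[0]

# Hypothetical scores a model might assign after "The cat sat on the"
next_word_probs = {"mat": 0.6, "sofa": 0.25, "roof": 0.1, "keyboard": 0.05}

rng = random.Random(42)  # fixed seed, so this run is repeatable
print(sample_next_word(next_word_probs, rng))
```

Change the seed and you may get a different word; keep it fixed and you get the same one every time. That’s the random number doing the work, not a preference.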

As always: that’s not to say it’s useless. (It’s also not to say it’s always useful.) But as a tool, it’s pretty opaque to most normal people.

Unless you’ve really taken a deep-dive into understanding how LLMs work, they must seem like magic (hell, speaking as somebody who has taken such a deep-dive, they sometimes seem like magic!). I’m sure that, some of the time, they must seem like a living thing, or at least an approximation of one.

But they’re not. And it’s important to remember that.


The evidence on AI’s effect on those who use it has been coming in, and it’s not good. While it doesn’t affect everyone, it seems to affect most people, and the worst affected, it seems, are the young. Olds have the advantage of growing up in a world where they had to learn how to do things themselves. To be sure, phones and social media seem to have had a negative effect on attention span and learning ability, but AI is yet another assault, and it hits the young hardest.

This excerpt comes from a larger piece by a university professor on his students’ inability to read. The whole thing is worth reading, and the decline is truly precipitous: fundamentally, most of them can’t read an entire book, struggle even with long articles, and can’t pull out the arguments being made. The bit on AI follows:

Another reason for the decline in student reading capability is increasing reliance on generative AI. In June 2025, Nataliya Kosmyna and colleagues at the MIT Media Lab released a preprint titled “Your Brain on ChatGPT.” They divided 54 participants into three groups writing SAT-style essays — one using ChatGPT, the second group using a search engine, the last group using nothing — and monitored brain activity with a 32-channel EEG. The ChatGPT group showed the lowest neural connectivity of the three, with up to 55 percent reduced connectivity compared with the brain-only group, and “consistently underperformed at neural, linguistic, and behavioral levels.” Eighty-three percent of LLM users could not quote a single line from essays they had written minutes earlier. When the LLM group was forced to write without AI in a follow-up session, their brain activity did not bounce back to baseline; the researchers coined the term “cognitive debt” for the lingering deficit.

The fundamental strategy of a lot of tech startups has been to degrade pre-existing infrastructure by under-pricing, for years if necessary, until the old methods are so diminished that they can start charging monopoly pricing. Uber is the classic example: Ubers were far cheaper than taxis for about a decade. Now they’re often more expensive, if taxis exist at all. Certainly where I live, in Toronto, the taxis did somewhat survive, and they cost less.

But overall the strategy was a success: taxi companies were devastated and Uber’s doing great now. All it took was years of losses and predatory pricing. Their model wasn’t superior, and their product wasn’t superior apart from having a good app, but they had far more access to patient money, willing to take losses for years to reach the oligopoly-pricing end state.

Neither Anthropic nor OpenAI is remotely profitable. Every single query costs more to run than is charged, even to paying clients. A recent increase in prices, still far below running costs, has hit users with massive bills. There’s no evidence AI is better than humans at most tasks, and the real cost (and sometimes even the current, subsidized price) is higher than just having employees. AI is often faster, but it makes mistakes humans don’t, and its output needs to be checked.

But if you make your employees use it they’re going to be degraded and lose the ability to do their jobs well. The more you do something, the more your body and brain optimize for it. The less you do it, the worse you get.

AI’s strategy for replacing workers is threefold. First: sell executives on replacing pesky workers with AI, because AI is supposedly easier to manage.

Second: subsidize while companies lay off the workers and replace them with AI. Once the workers are gone, jack up prices.

Third: by encouraging companies to force workers to use AI, and to replace workers with AI in some cases, make the workers less capable: stupider. Over time, as more and more people become dependent on AI to think and work for them, they will lose the ability to do the work themselves. AI may be shitty, but it will be better than the dullards AI makes its users into.

It’s an ingenious strategy, really. Make people stupid, and replace them with a product which costs more and is inferior to them for most tasks before they were made stupid.

The longer-term issue will be that AI isn’t creative: it uses the embodied creativity of past humans, in their writing and their discoveries, to simulate intelligence. But as humans produce less and less new creative work, AI will be reduced to eating its own output, and indications are that this leads to model collapse. AIs are dependent on humans, and by making humans redundant and stupid, they will themselves become stupider and less effective over time.

We live in a time where, it seems, we can’t ever look ahead at technology and make even the smallest effort to control the end results. At least in the West. Or, rather, we refuse to deal with obvious negative issues if doing so means a few people won’t be able to get as filthy rich.

Dumb.

And soon we’ll be even dumber.

What I write here is for the benefit of everyone, but alas, I live in capitalism and I, and the site, take money to keep running. If you value the writing here and can, please subscribe or donate.

A great many, and perhaps the majority, of Americans now between their late twenties and early sixties have spent time in Mister Rogers’ neighborhood. My own period of regular visitation would have been in the nineteen-eighties, a decade when Fred Rogers introduced his preschool-age viewers to guest stars from Lou Ferrigno, in and out of Incredible Hulk makeup, to a ten-year-old boy with spina bifida. He also took on geopolitical issues, up to and including mutually assured nuclear destruction, and social ones, as on the memorable “divorce week” of 1981. Such topical broadcasts were mixed in with re-runs produced as far back as 1969, the year Mister Rogers got the country’s attention by inviting Officer Clemmons to share his wading pool.

What those of us then tuning in didn’t see was anything from the first, black-and-white season of Mister Rogers’ Neighborhood, which comprised an astonishing 130 episodes that aired in 1968 alone. You can watch the series premiere at the top of the post, just recently uploaded onto the show’s new official channel.

It may come as a shock to see a 39-year-old Mister Rogers, whom most of us remember as the embodiment of avuncularity or even grandfatherliness. But what’s even more striking, if unsurprising, is that his onscreen persona, with its disinclination to talk down to children, never really changed. That surely owes to its apparent identity with his offscreen persona: as he liked to put it, “kids can spot a phony a mile away.”

“Aside from clips and compilations,” writes the New York Times’ Sopan Deb, “the channel will make a selection of full-length episodes available globally for the first time as well as some that haven’t aired in several decades on PBS stations.” With the show’s 60th anniversary coming up the year after next, the time does seem right to make as many of its 895 episodes as possible available to a new generation. As of now, the channel also offers the episodes with Officer Clemmons and the pool, Koko the Gorilla, and the mesmerizing look inside the crayon factory. There’s even the crossover between Mister Rogers and Bill Nye the Science Guy from 1997, by which time the latter had become a television icon to us millennials. Though we probably didn’t catch his visit at the time, we can now keep it bookmarked to show our own kids — assuming they don’t discover it first.

Related Content:

Mr. Rogers Takes Breakdancing Lessons from a 12-Year-Old (1985)

Mr. Rogers’ Nine Rules for Speaking to Children (1977)

Mr. Rogers Introduces Kids to Experimental Electronic Music by Bruce Haack & Esther Nelson (1968)

Watch the First Episode of Sesame Street and 140 Other Free Episodes

Based in Seoul, Colin Marshall writes and broadcasts on cities, language, and culture. He’s the author of the newsletter Books on Cities as well as the books 한국 요약 금지 (No Summarizing Korea) and Korean Newtro. Follow him on the social network formerly known as Twitter at @colinmarshall.