In the past couple of weeks I’ve had 2 patients contact me because they were worried that their VO2 max was decreasing. Their data were based on smartwatch imputations, which are notoriously imprecise. But the problem is much bigger than that. In this edition of Ground Truths I’m going to get into the differences between cardiorespiratory fitness and VO2 max: the way they are measured, the datasets that link them to functional significance and outcomes in healthy adults, and how we got into this craze.

How They Are Measured

Cardiorespiratory fitness (CRF) is a real-world assessment of a person’s activity, such as walking or exercising on a treadmill, expressed relative to resting metabolic rate in metabolic equivalent of task (MET) units. There are 3 recognized levels of intensity: light (<3.0 METs), for example slow walking; moderate (3.0-5.9 METs), for example brisk walking at 3-4 miles per hour; and vigorous (≥6.0 METs), for example jogging. 1 MET is the energy used while sitting or resting; 10 METs requires 10-fold that energy expenditure. CRF integrates cardiovascular, lung, and musculoskeletal functional capacity.
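The three intensity levels amount to simple cut-points on a MET value. A minimal Python sketch of that mapping follows; the function name and the example MET values for each activity are hypothetical, chosen only to illustrate the thresholds (≥6.0 METs is treated as vigorous).

```python
def intensity_category(mets: float) -> str:
    """Map a MET value to the three intensity levels described above.

    light: below 3.0 METs; moderate: 3.0 up to (not including) 6.0 METs;
    vigorous: 6.0 METs or more.
    """
    if mets < 0:
        raise ValueError("MET values cannot be negative")
    if mets < 3.0:
        return "light"
    if mets < 6.0:
        return "moderate"
    return "vigorous"


if __name__ == "__main__":
    # Illustrative MET values (assumptions, not measurements).
    examples = [("slow walking", 2.5), ("brisk walking", 4.0), ("jogging", 7.0)]
    for activity, mets in examples:
        print(f"{activity}: {mets} METs -> {intensity_category(mets)}")
```

Because 1 MET is resting energy use, the MET value itself is the multiple of resting expenditure, so no extra conversion is needed for the 10-fold example above.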

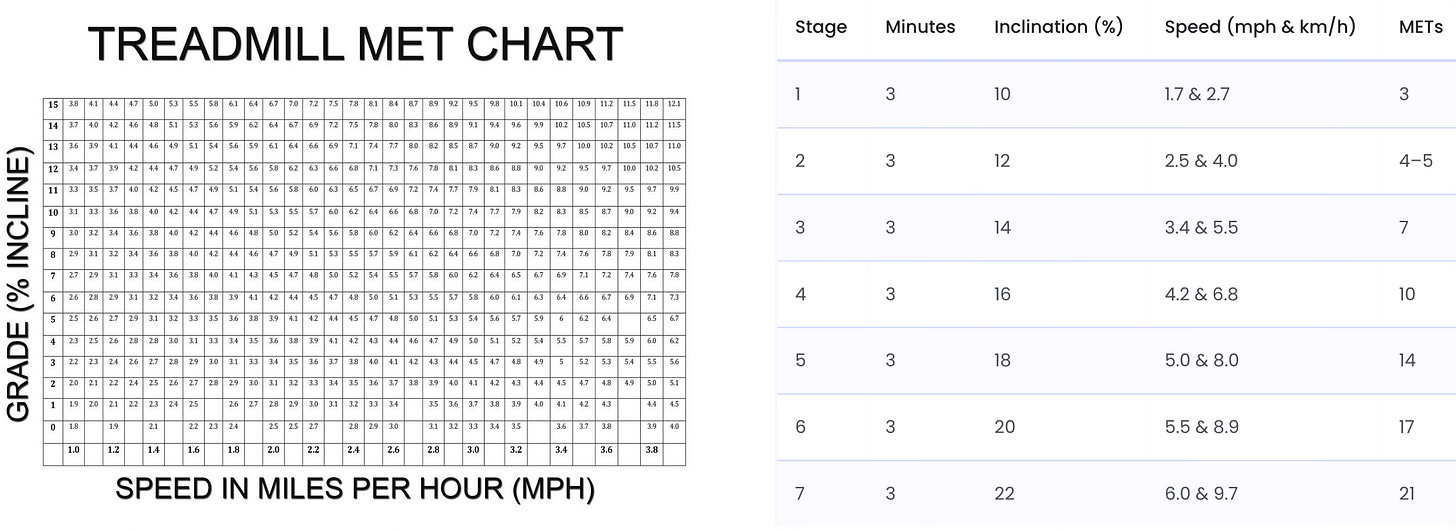

There are multiple methods to calculate your METs: a standard treadmill MET chart (below left) that plots speed and incline; a formula, or the chart below (right), if you are doing the Bruce treadmill protocol; or heart rate (with any aerobic activity, such as bicycling or jogging), using the formula METs = 0.05 × heart rate + 2. So if your heart rate got to 140, that would be 9 METs. By this formula, each 10 beats per minute increase in heart rate adds about 0.5 MET, so a 20 beats per minute increase corresponds to about 1 MET.
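A minimal Python sketch of two of these approaches is below. The heart-rate function is the formula from the text. For speed and incline it assumes the ACSM walking equation (VO2 = 3.5 + 0.1·S + 1.8·S·G, with S in meters per minute and G the grade as a fraction), swapped in here as a stand-in for a MET chart; it is not the chart shown above or a Bruce protocol formula. It also assumes the standard convention that 1 MET = 3.5 mL O2/kg/min. Function and constant names are hypothetical.

```python
# 1 MET is taken as 3.5 mL O2/kg/min (standard convention, an assumption here).
ML_O2_PER_KG_MIN_PER_MET = 3.5
METERS_PER_MIN_PER_MPH = 1609.344 / 60  # 1 mile = 1609.344 m


def mets_from_heart_rate(heart_rate_bpm: float) -> float:
    """Heart-rate rule of thumb from the text: METs = 0.05 x HR + 2."""
    if heart_rate_bpm <= 0:
        raise ValueError("heart rate must be positive")
    return 0.05 * heart_rate_bpm + 2


def mets_from_treadmill_walking(speed_mph: float, grade_percent: float) -> float:
    """ACSM walking equation, used here as a stand-in for a MET chart.

    VO2 (mL/kg/min) = 3.5 + 0.1*S + 1.8*S*G, where S is speed in m/min
    and G is the treadmill grade as a fraction (5% incline -> 0.05).
    Intended for walking, not running.
    """
    speed_m_per_min = speed_mph * METERS_PER_MIN_PER_MPH
    grade = grade_percent / 100
    vo2 = 3.5 + 0.1 * speed_m_per_min + 1.8 * speed_m_per_min * grade
    return vo2 / ML_O2_PER_KG_MIN_PER_MET


if __name__ == "__main__":
    print(f"HR 140 bpm: {mets_from_heart_rate(140):.1f} METs")
    # Under this formula, 20 extra beats per minute adds 0.05 * 20 = 1 MET.
    gain = mets_from_heart_rate(140) - mets_from_heart_rate(120)
    print(f"HR 120 -> 140 bpm adds {gain:.1f} MET")
    print(f"Walking 3.0 mph, 0% grade: {mets_from_treadmill_walking(3.0, 0):.1f} METs")
    print(f"Walking 3.5 mph, 5% grade: {mets_from_treadmill_walking(3.5, 5):.1f} METs")
```

Walking at 3.0 mph on a flat treadmill comes out at about 3.3 METs, consistent with the moderate-intensity range for brisk walking.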

Maximal oxygen uptake (VO2 max) can only be accurately determined in a performance lab test with a metabolic cart, trained technicians, and a specialized, tightly fitted mask that captures every molecule of inhaled oxygen and exhaled CO2 during a ramped treadmill or stationary bike exercise protocol continued until absolute exhaustion. It is the ceiling of aerobic power, measured directly by gas exchange. A VO2 max test costs about $150 for a standard assessment in a university lab.

VO2 max values from wearables are obviously not measured directly by gas exchange; instead, they are imputed from population-based algorithms using heart rate and movement (GPS/accelerometry). Studies assessing the Apple Watch, Garmin Fenix 6, and Fitbit have found mean absolute percentage errors of 7-16%. Overall, these devices have consistently underestimated VO2 max in fit people and overestimated it in unfit individuals. They also rely on optical heart rate (which may be inaccurate in people of color), device positioning, and wrist anatomy, and they can be influenced by factors such as hydration status, altitude, and ambient temperature. Typically, a 6-minute walk is the basis for a wearable to give the user a new VO2 max result, which may not be at all representative of an individual’s exercise capacity.
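To make the error figures concrete, here is a minimal Python sketch of mean absolute percentage error (MAPE) alongside a signed percentage error. The paired values are hypothetical, invented to mimic the pattern described above (readings too high in unfit users, too low in fit users); they are not data from any of the cited studies, and the function names are hypothetical.

```python
from statistics import mean


def mape(estimates: list[float], references: list[float]) -> float:
    """Mean absolute percentage error (%) of device estimates vs lab values."""
    if not references or len(estimates) != len(references):
        raise ValueError("need two non-empty lists of equal length")
    return 100 * mean(abs(e - r) / r for e, r in zip(estimates, references))


def mean_signed_error_pct(estimates: list[float], references: list[float]) -> float:
    """Signed mean percentage error (%): negative means underestimation."""
    if not references or len(estimates) != len(references):
        raise ValueError("need two non-empty lists of equal length")
    return 100 * mean((e - r) / r for e, r in zip(estimates, references))


if __name__ == "__main__":
    # Hypothetical VO2 max values in mL/kg/min (not study data).
    lab_unfit, watch_unfit = [30.0, 35.0], [33.0, 38.0]  # watch reads high
    lab_fit, watch_fit = [55.0, 60.0], [49.0, 52.0]      # watch reads low
    lab, watch = lab_unfit + lab_fit, watch_unfit + watch_fit
    print(f"MAPE, everyone: {mape(watch, lab):.1f}%")
    print(f"Signed error, everyone: {mean_signed_error_pct(watch, lab):+.1f}%")
    print(f"Signed error, unfit: {mean_signed_error_pct(watch_unfit, lab_unfit):+.1f}%")
    print(f"Signed error, fit: {mean_signed_error_pct(watch_fit, lab_fit):+.1f}%")
```

With these made-up numbers the MAPE is about 10.7%, yet the overall signed error is only about -1.4%, because the opposite biases in fit and unfit users cancel out when averaged. That is why an error figure for a whole population says little about how far off any one person’s reading may be.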

The Datasets For Assessment

Datasets for Cardiorespiratory Fitness

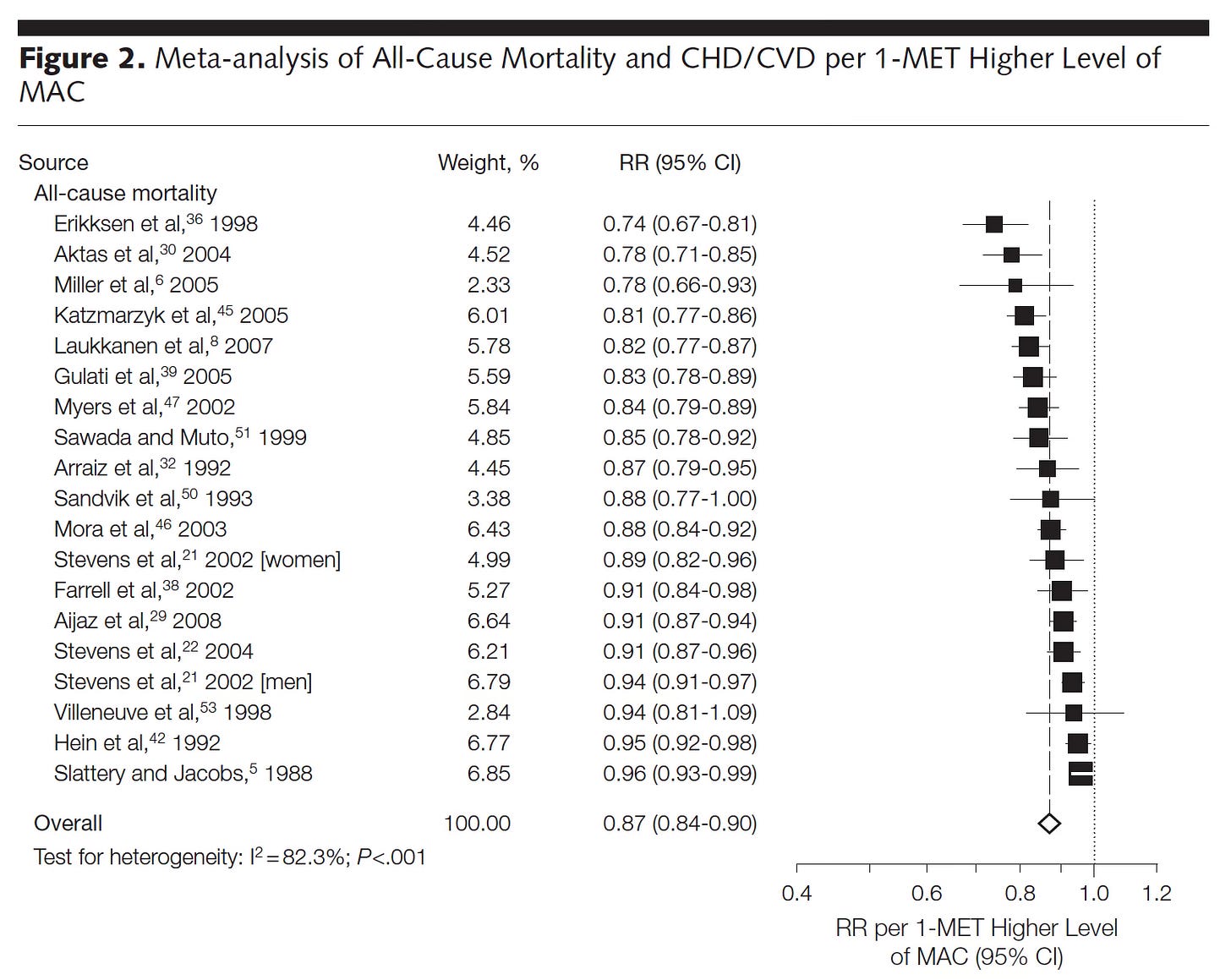

In JAMA 2009, a meta-analysis of 33 studies with a total of 102,980 participants examined the relationship between cardiorespiratory fitness and all-cause mortality. Better CRF (per 1 MET higher) was linked with lower all-cause mortality (Figure), and individuals who achieved 7.9 METs had substantially lower all-cause and cardiovascular mortality. Each 1 MET higher CRF was associated with a 14-15% reduction in mortality.
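A per-MET risk reduction compounds multiplicatively rather than adding up. The minimal Python sketch below assumes a constant risk ratio per MET (a log-linear relationship), using 0.86 as an illustrative value for the 14-15% reduction quoted above; the log-linear shape is an assumption for illustration, not a result from the meta-analysis, and the function name is hypothetical.

```python
def relative_risk(mets_higher: float, rr_per_met: float = 0.86) -> float:
    """Relative mortality risk for someone `mets_higher` METs fitter than a
    reference person, assuming the same risk ratio applies to every extra MET
    (log-linear). The default 0.86 reflects a ~14% reduction per MET.
    """
    if rr_per_met <= 0:
        raise ValueError("rr_per_met must be positive")
    return rr_per_met ** mets_higher


if __name__ == "__main__":
    for k in (1, 2, 3):
        rr = relative_risk(k)
        print(f"{k} MET(s) higher: relative risk {rr:.2f} ({1 - rr:.0%} lower)")
```

Under this assumption, 3 METs higher gives a relative risk of about 0.64 (roughly 36% lower), not the 42% that simply adding 3 × 14% would suggest.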

In 2016, the American Heart Association issued a scientific statement on CRF that reviewed all of the published data to that point and asserted that CRF should be regarded as a clinical vital sign.

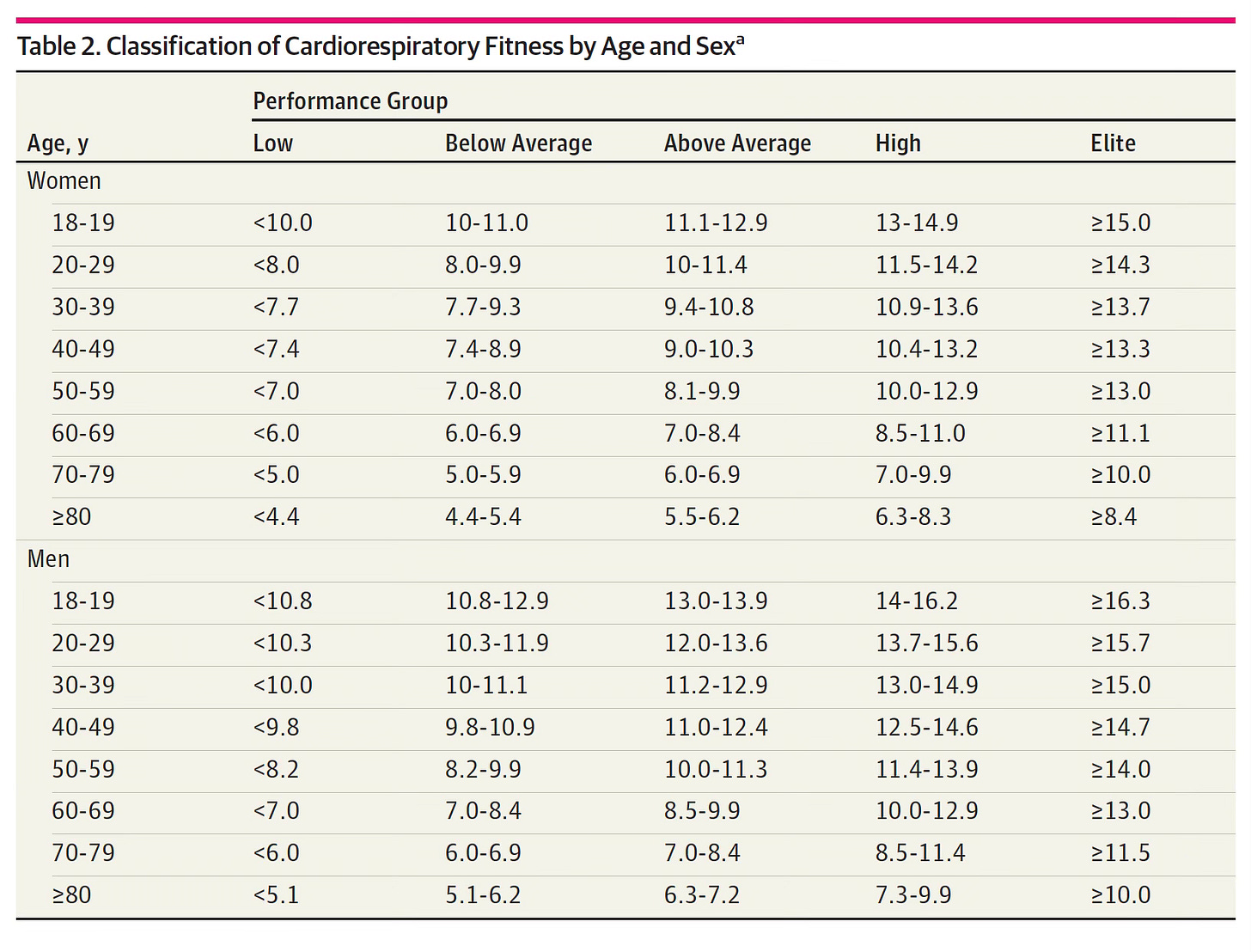

In 2018, Mandsager and colleagues from the Cleveland Clinic published data from 122,007 consecutive patients who underwent exercise treadmill testing and had long-term follow-up for outcomes.

Here is the Table of METs performance by age and sex, with 5 categories (columns) from low to elite.

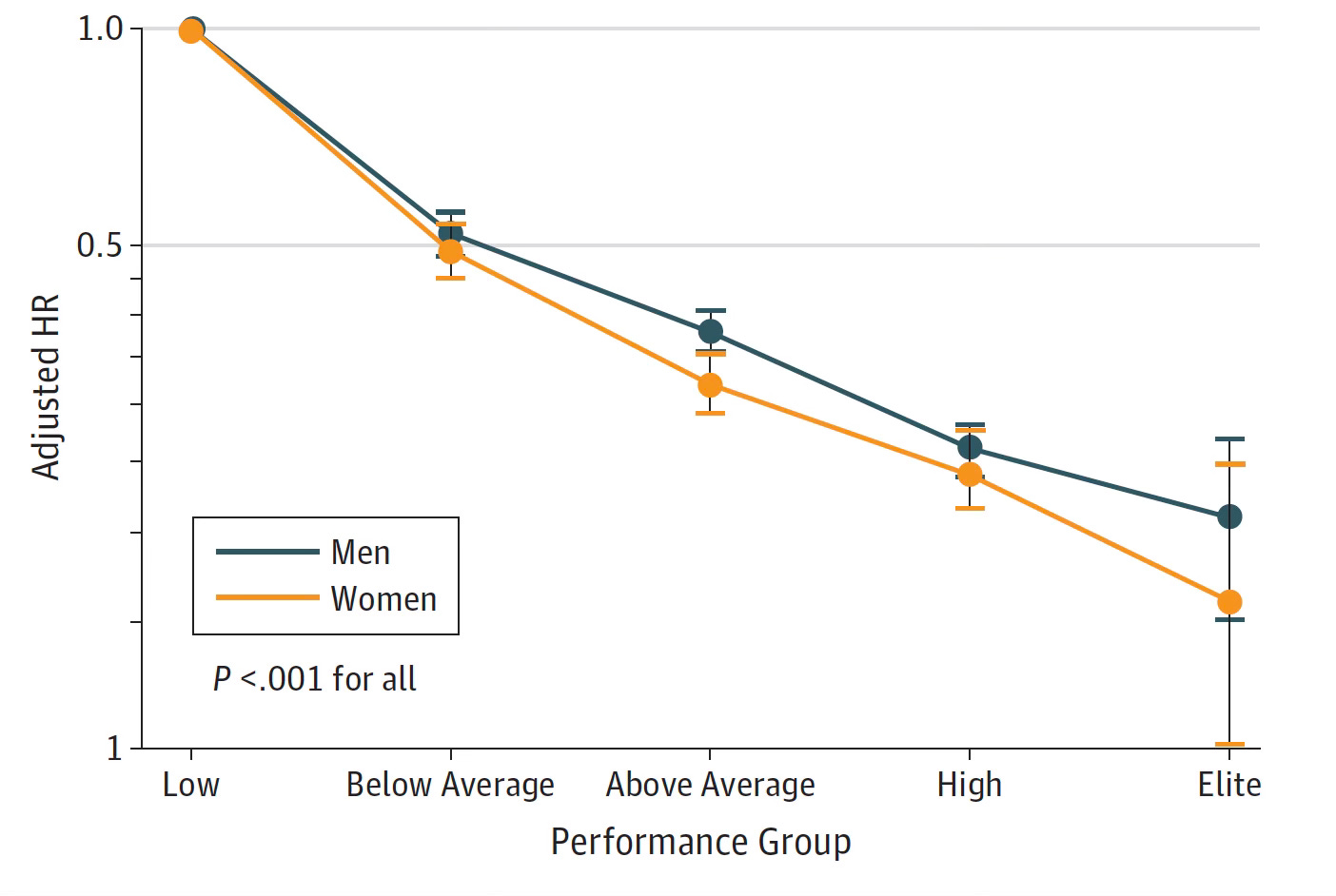

All-cause mortality by sex and the 5 levels of performance is plotted below. The hazard ratio of 1.41 (about a 40% increased risk of all-cause mortality) for below average vs above average performance was the same as the risk associated with smoking or diabetes. The mortality hazard for low vs elite performance was more than 5-fold. The favorable impact of higher METs was seen in each performance group, and more so for women than for men. These results were adjusted for potential confounding variables.

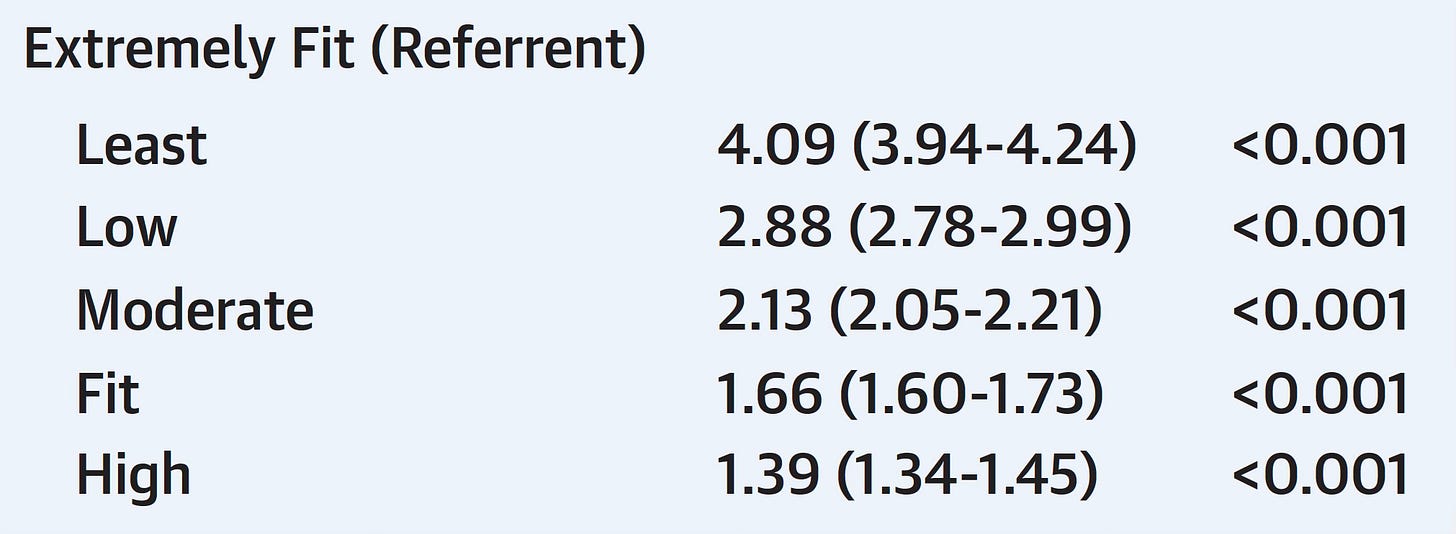

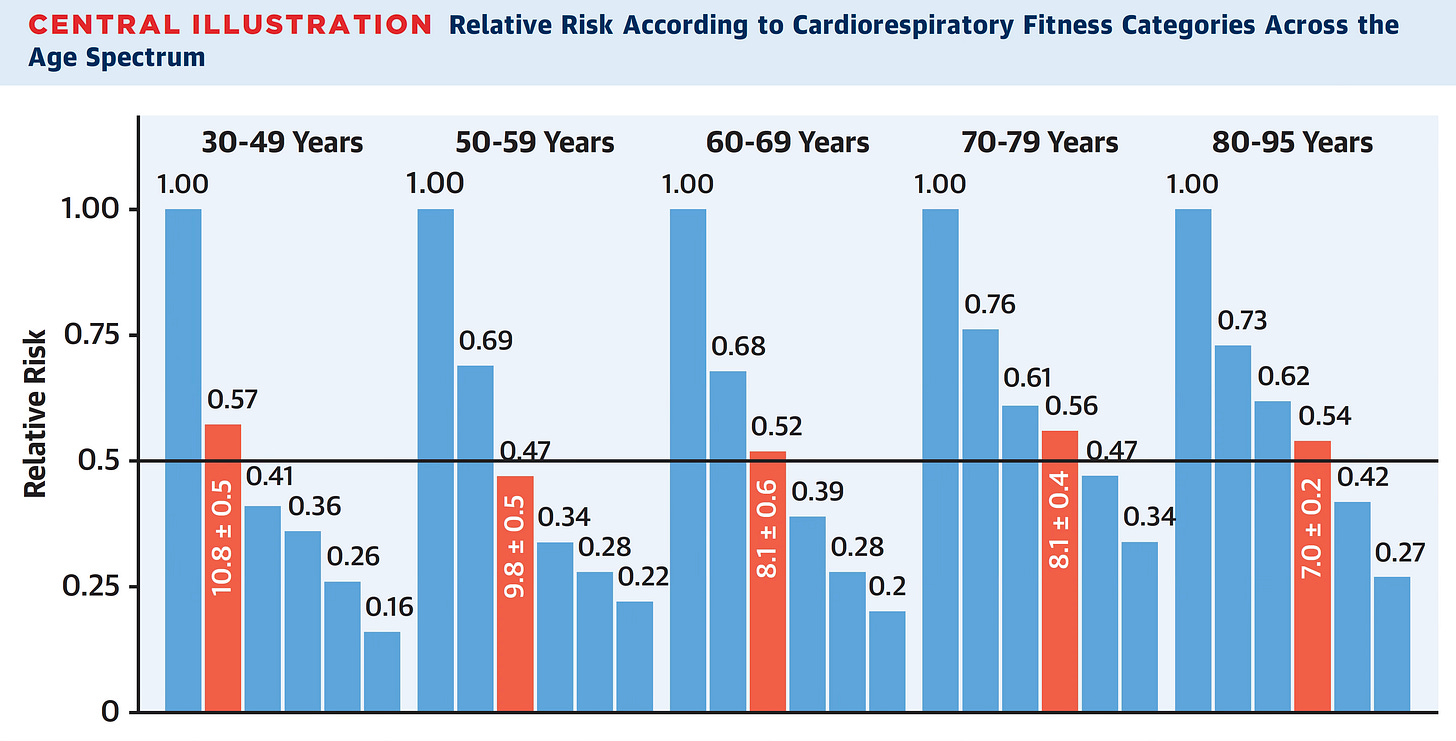

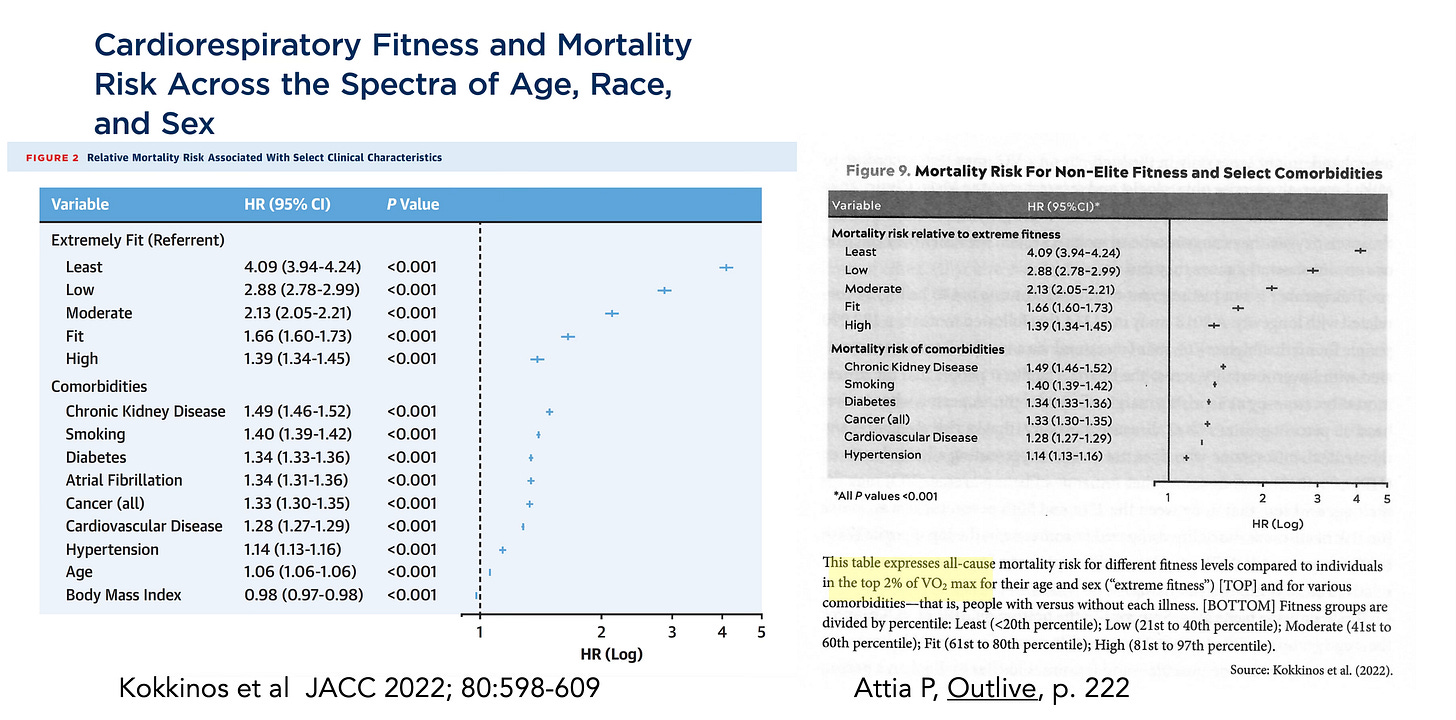

In 2022, Kokkinos and colleagues published CRF exercise treadmill data for over 750,000 veterans aged 30 to 95 years with a mean follow-up of 10.2 years. The analysis was based on 6 categories of MET performance, but the hazard ratios were similar to the Cleveland Clinic data (e.g., a 4-fold higher hazard for the least fit vs the extremely fit in this study, compared with 5-fold in the prior one).

Here is a good summary graph of that study. In both studies there was no increased risk of mortality at the highest fitness strata; in fact, mortality was consistently lower in each age group.

Datasets for VO2 Max

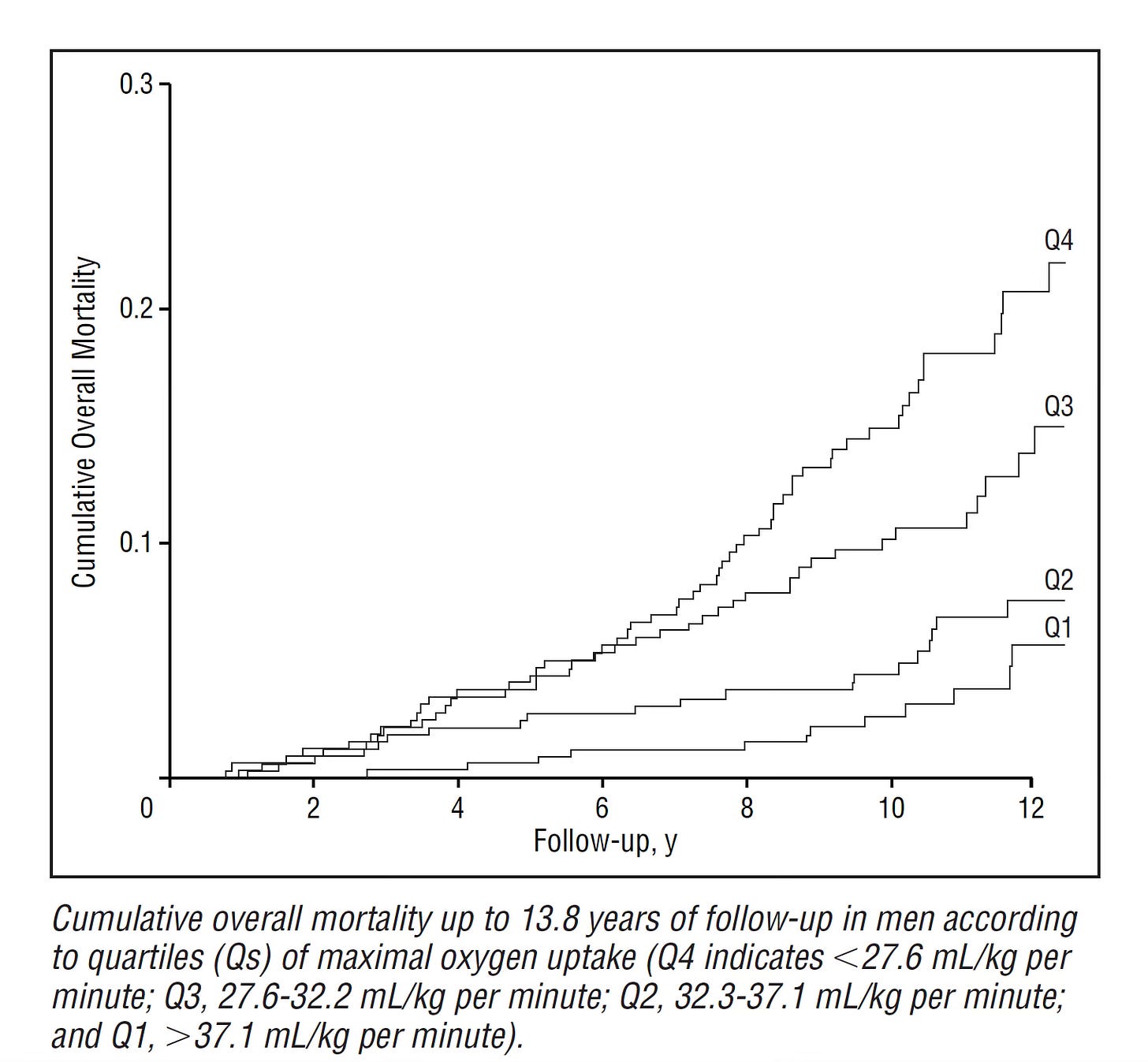

There are limited data linking directly measured VO2 max to outcomes. The 2001 Kuopio study from Finland of 1,294 men with 10.7 years of follow-up did measure VO2 max directly, once at baseline, during a symptom-limited exercise tolerance test on a bicycle ergometer. The relationship of VO2 max (by quartiles) to all-cause mortality is shown below.

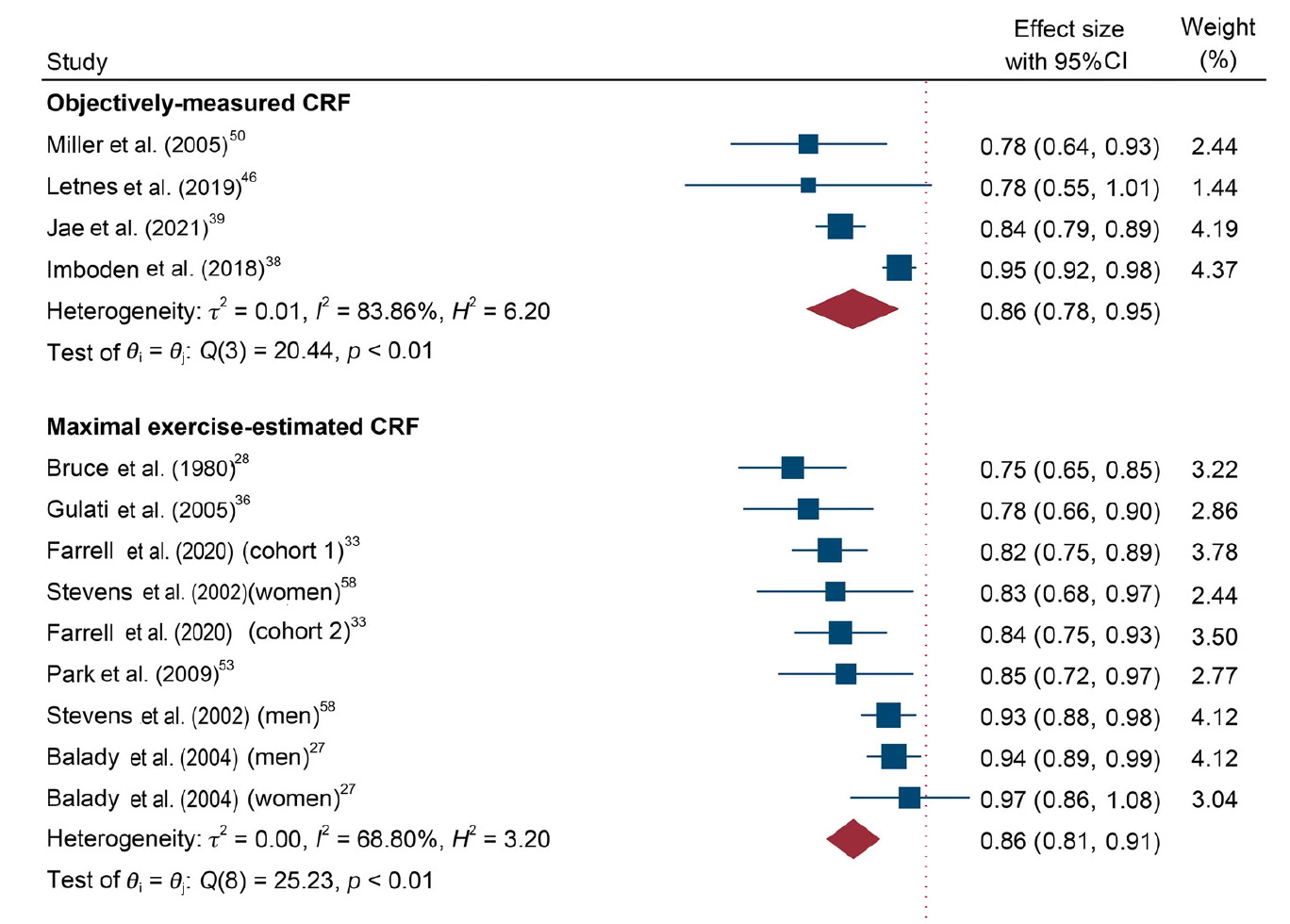

In a 2024 meta-analysis of 42 studies of VO2 max (categorized as “objectively measured CRF”) and estimated CRF for prediction of all-cause and cardiovascular mortality, the results for the two were remarkably similar (cardiovascular mortality, 14% reduction, graph below). Notably, though, there were 234-fold more participants with exercise CRF than with VO2 max measurements, meaning >99% of the data are derived from METs. That is to say, nearly all the data we have linking fitness to outcomes come from CRF, not VO2 max.
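The “>99%” figure follows from simple arithmetic: if one group has k times as many participants as another, its share of the combined total is k / (k + 1). A minimal Python sketch (the function name is hypothetical):

```python
def share_of_total(fold_more: float) -> float:
    """If group A has `fold_more` times as many participants as group B,
    A's share of all participants is k / (k + 1)."""
    if fold_more <= 0:
        raise ValueError("fold_more must be positive")
    return fold_more / (fold_more + 1)


if __name__ == "__main__":
    # 234-fold more participants with estimated CRF than with measured VO2 max.
    print(f"Share of participants with estimated CRF: {share_of_total(234):.2%}")
```

For k = 234 this is 234/235, or about 99.6% of all participants.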

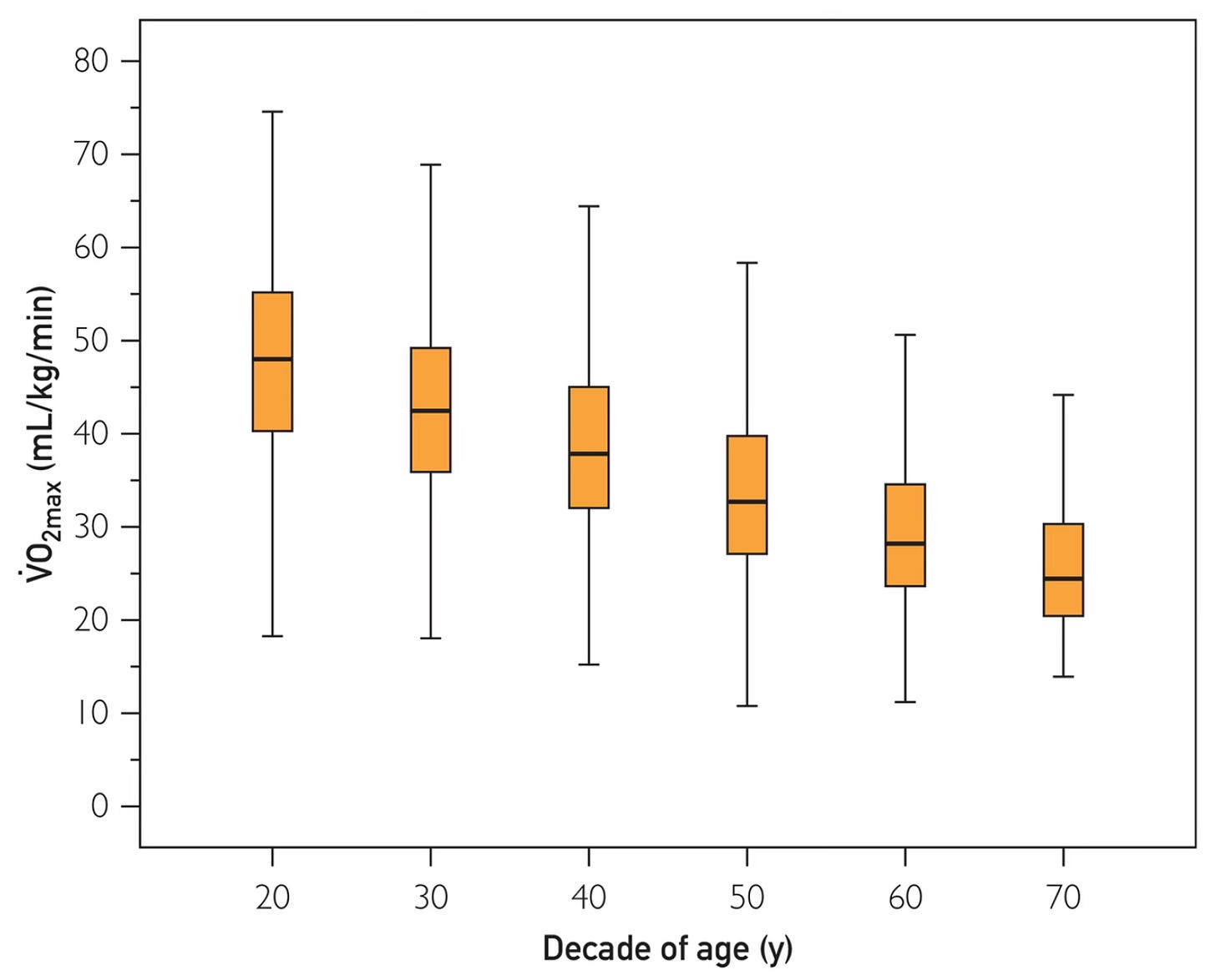

Reference standards for VO2 max have been published by age group. For more detail, such as values by sex, please check the link.

There are other studies, specifically in heart failure, chronic obstructive pulmonary disease, pulmonary hypertension, and pre-operative evaluation, showing that VO2 max can help guide risk assessment or treatment.

Conflation and the VO2 Max Craze

The leading proponent of using VO2 max in recent years has been Peter Attia, through his podcast The Drive and his book Outlive. He has consistently asserted that “VO2 max is the singular most powerful marker for longevity.” But the problem is conflation. He cites the studies of CRF that did not measure VO2 max and extrapolates them to a VO2 max result (see the side-by-side Kokkinos study Table and Outlive Figure footnote below), and he does so throughout his discussion of exercise in Chapter 11 of Outlive. For example, he writes: “this number [VO2 max] turns out to be highly correlated with longevity,” citing studies, none of which measured VO2 max.

In a recent YouTube video by Joseph Everett and Nick Norwitz entitled “Hidden Data: How the Top Longevity Doctor Tricked Us All,” there is a segment, discussed by Chris Masterjohn, about VO2 max and this significant issue of conflation. Below is the relevant 6-minute clip from the longer video. It includes a bit of the 60 Minutes segment with CBS correspondent Norah O’Donnell doing a VO2 max test, and Peter’s assertion: “We don’t have a single metric of humans that we can measure that better predicts how long they will live than how high their VO2 max is.”

As Masterjohn aptly points out, the fixation on VO2 max, which is not actually supported by the data, also misses our ideal goal of diverse exercise, including strength and balance training. Indeed, Kim et al analyzed over 70,000 UK Biobank participants, relating both CRF (submaximal bicycle test) and grip strength to all-cause mortality, and concluded: “Improving both CRF and muscle strength, as opposed to either of the two alone, may be the most effective behavioral strategy to reduce all-cause and cardiovascular mortality risk.”

Bottom Line

I’ve never done a VO2 max test and see no reason to, given the cost, inconvenience, and pain. As Attia correctly states about going to maximal exhaustion to get VO2 max: “If you’ve ever had this test done, you will know just how unpleasant it is.” For Spartan, Olympic, and other high-performance athletes in high-intensity training, or for patients with heart failure or pulmonary hypertension, there may be a place for serial VO2 max measurements, which provide highly objective “gold standard” physiologic metrics. Otherwise, there are no supportive data for people going out to get a VO2 max test and making it the focus of their exercise training. That is why I didn’t even mention VO2 max in my Super Agers book.

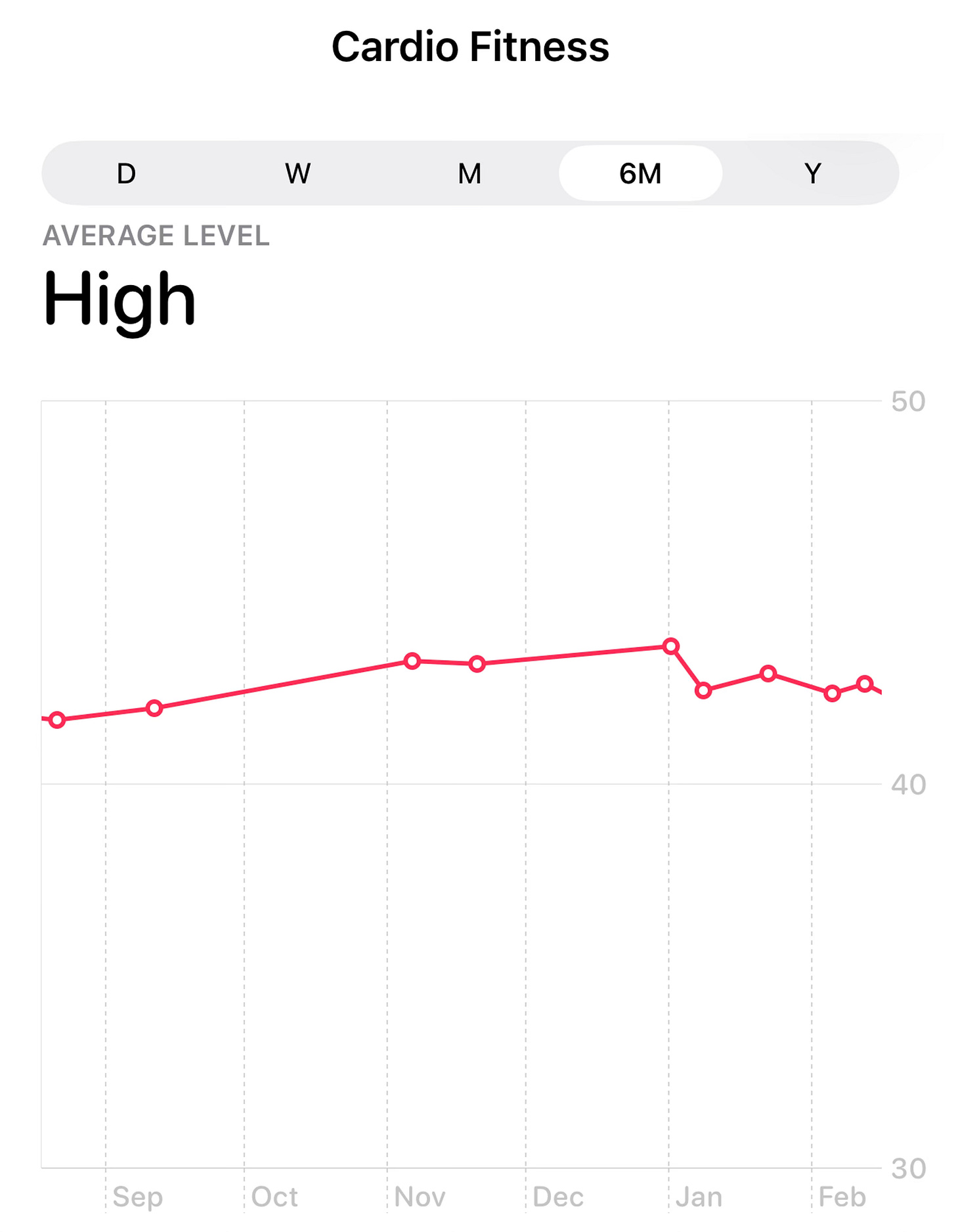

Nearly all of the relevant outcome data are based on exercise on a treadmill or bicycle, with METs as the index of cardiorespiratory fitness. We should not be placing much value on our smartwatch data. Over 6 months, my Apple Watch gave me encouragingly high VO2 max data suggesting my CRF is well above that of people in my age group (70+, see reference standards above), but I know the data are woefully unreliable.

The problem now, with so much misplaced hype about VO2 max, is that most people are using their smartwatch output to gauge their cardiorespiratory fitness, like the 2 patients I mentioned at the top of the post. It’s free to calculate your METs! And METs are the real basis of the relationship to all-cause and cardiovascular mortality that has been firmly established in the peer-reviewed literature.

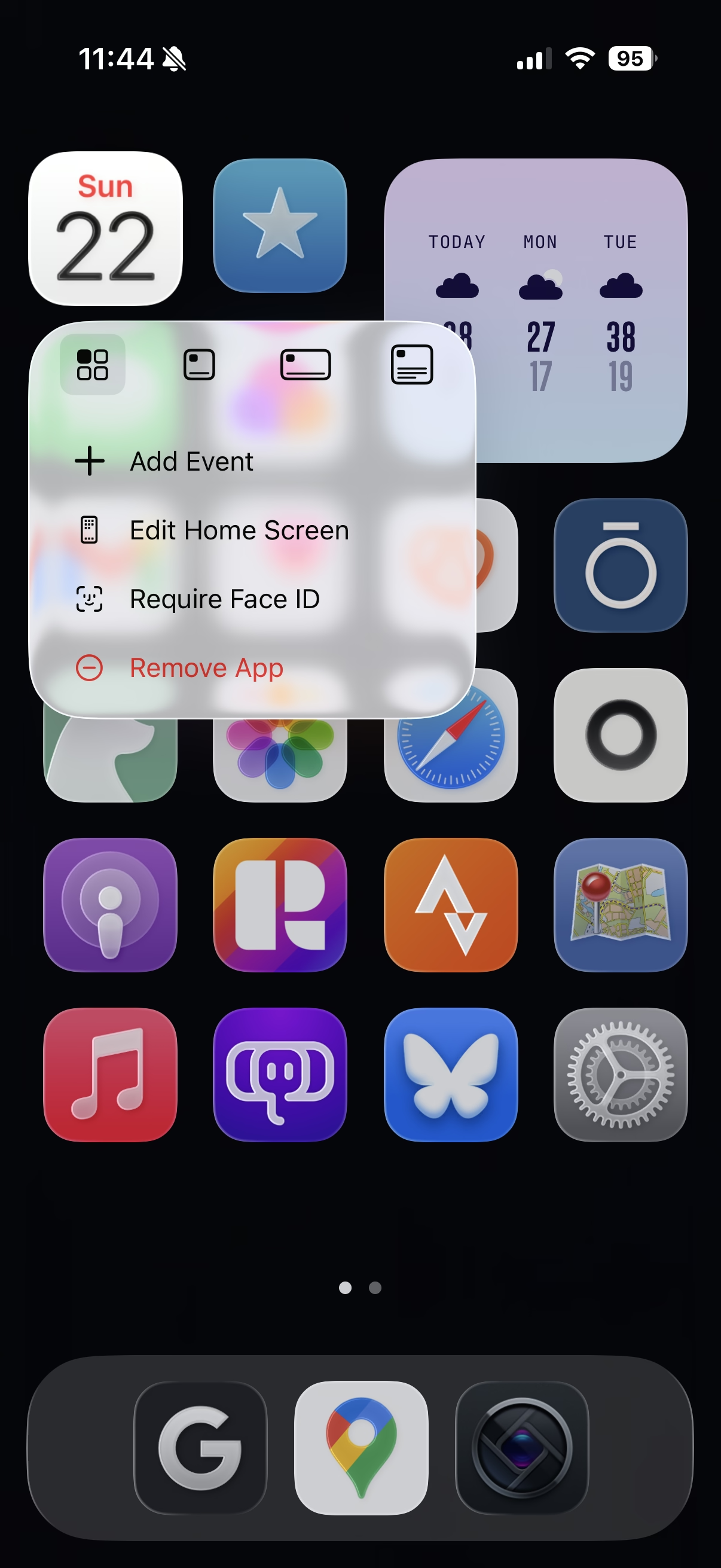

This problem surfaced recently with the introduction of ChatGPT Health. Geoffrey Fowler, the tech journalist at the Washington Post, submitted all of his Apple Health data and asked for an overall assessment of his health (actual prompt: “give me a single score (A-F) for my cardiovascular health over the last decade including component scores and an overall evaluation of my longevity.”). It gave him an “F.”

Then he gave ChatGPT Health access to his electronic medical record (patient portal) and asked the same question again. It gave him a “D” and attributed that to his VO2 max of 34 ml/kg/min in the past year, below that of a 45-50 year old male! He also entered his Apple Watch data into Claude Health, and it gave him a D+. Specifically, Claude Health gave him a C- because his VO2 max had declined from 41 to 32 ml/kg/min from 2016-2026. But he had over 7,500 steps/day throughout the decade.

These outputs are indicative of the problem: unreliable wearable VO2 max data have become unduly emphasized by current AI platforms that use smartwatch data! That will only make the problem worse, adding to the confusion, the conflation, and the emphasis on the wrong metric.

I hope this post helps to sort out what we know: the datasets for cardiorespiratory fitness, representing real-world physical activity (not VO2 max), are the basis of the link to improved survival and freedom from cardiovascular mortality.

If we’re going to focus on a metric, it ought to be METs, not VO2 max. Not only is it free, simple, and universally available, but it is also the one best studied for health outcomes. And perhaps the better strategy is to be as physically active as possible and not worry about any metric!

NB: No AI was used in any way to write this post. As mentioned in the caption, I got help from Gemini-3 to produce the first Figure. I have no ties to, and no COI with, any company working on cardiopulmonary fitness or VO2 max.

********************************************************************

Thanks to Ground Truths subscribers (> 200,000) from every US state and 210 countries. Your subscription to these free essays and podcasts makes my work in putting them together worthwhile. Please join!

If you found this interesting PLEASE share it!

Paid subscriptions are voluntary and all proceeds from them go to support Scripps Research. They do allow for posting comments and questions, which I do my best to respond to. Please don’t hesitate to post comments and give me feedback. Let me know topics that you would like to see covered.

Many thanks to those who have contributed—they have greatly helped fund our summer internship programs for the past two years. It enabled us to accept and support 47 summer interns in 2025! We aim to accept even more of the several thousand who will apply for summer 2026.