Hello!

Today’s email is a little longer than usual—though I like it and it has pictures. Feel free to just stay for the sketch as always.

Jono

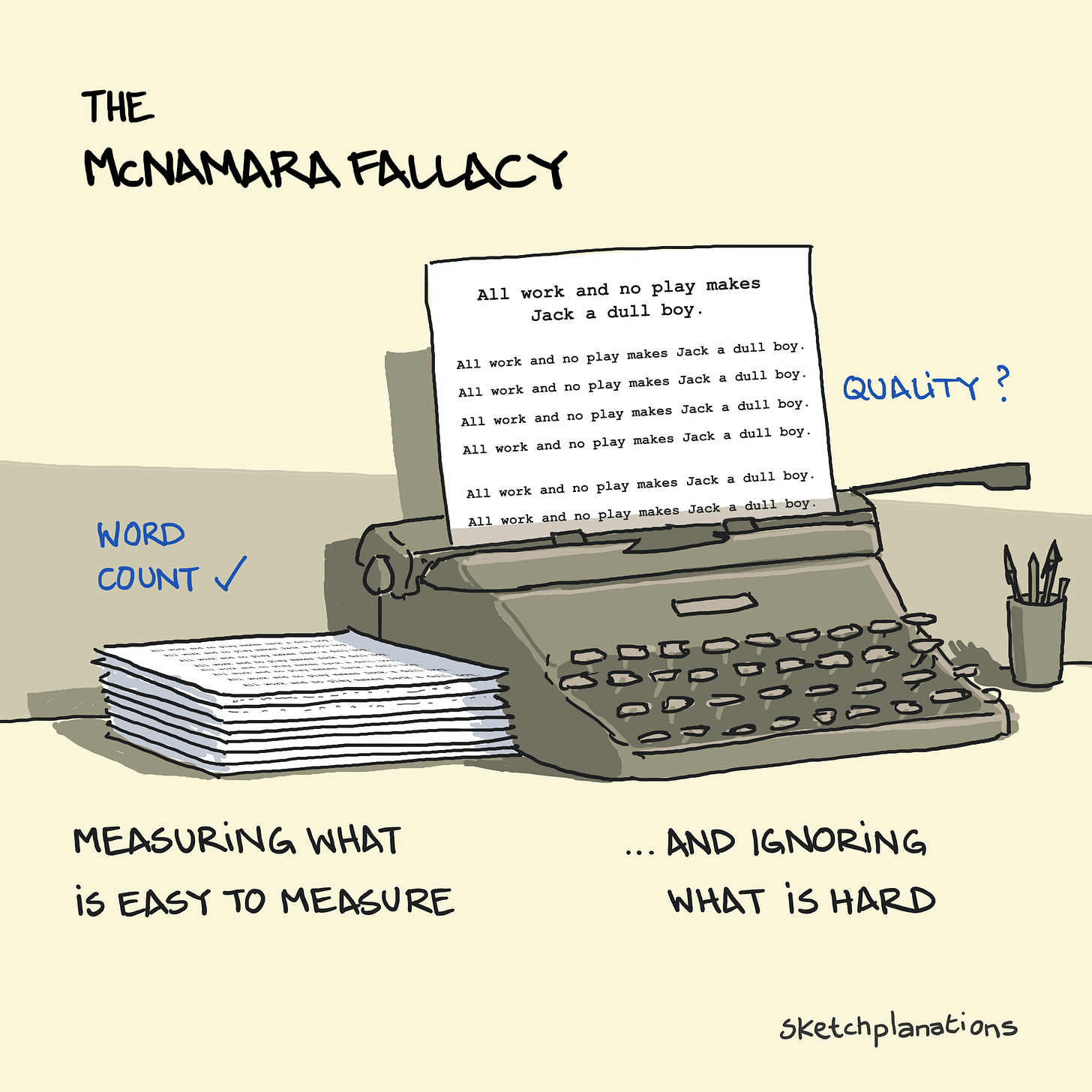

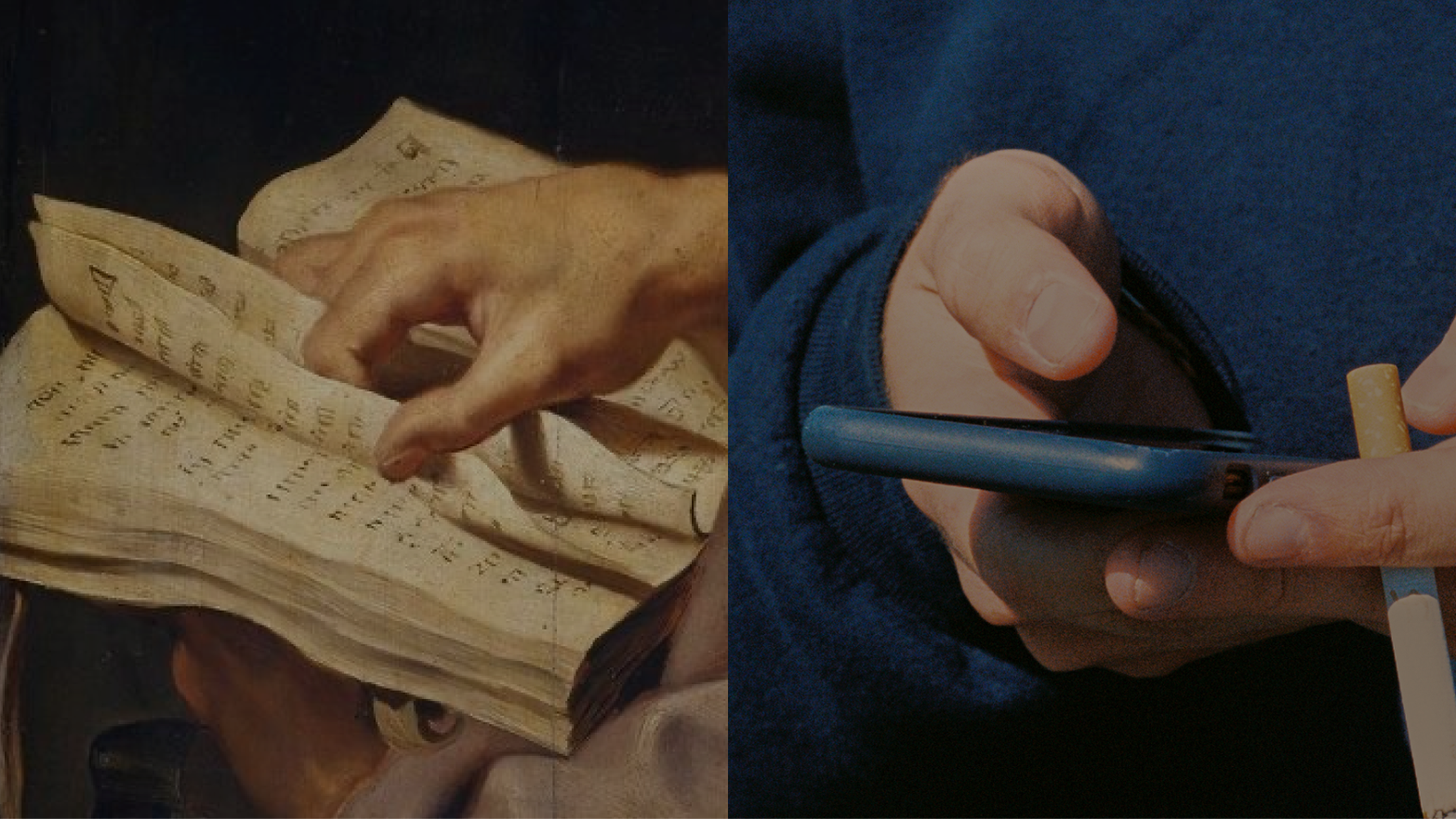

The McNamara Fallacy is a reliance on easy-to-measure quantitative metrics while ignoring hard-to-measure qualitative factors.

Robert McNamara was president of Ford Motor Company and later the Secretary of Defense for the USA during much of the war in Vietnam. He was highly intelligent and excelled at dealing with data and using it to inform strategy.

Coined by social scientist Daniel Yankelovich, the McNamara Fallacy, also called the Quantitative Fallacy, involves:

- Prioritising easier-to-measure quantitative metrics, and
- Disregarding hard-to-measure qualitative metrics as unimportant

The fallacy can progress as follows:

1. Measure what can be measured
2. Disregard what we can’t measure
3. Assume what cannot be measured is not important
4. Conclude that what can’t be measured doesn’t exist

In the words of Yankelovich:

“The fallacy is: If you’re confronted by a complex problem that is full of intangibles, you decide to measure only those aspects of the problem that lend themselves to easy quantification, either because you find other aspects difficult to measure or because you assume that they can’t be very important or don’t even exist.” …

“It is a short, fatal step from the statement, ‘There are many intangibles and imponderables that we can’t put on our computers,’ to the statement, ‘Let’s measure what we can and forget about the intangibles.’”

Yankelovich describes working with Ford during McNamara’s time and sharing research that included both quantitative and qualitative factors. As the research was assessed, the qualitative data on the meanings people gave to small cars were discarded, and the less significant quantitative and demographic data were retained.

Examples of the McNamara Fallacy

I first learned about the fallacy from a reader’s article about one of the easiest-to-measure aspects of a bike: its weight. All else being equal, we’d probably prefer a lighter bike, but other aspects like maintenance, reliability, and handling are important yet harder to measure, report on, and compare. As a result, weight often comes to the fore at the expense of the others.

Other examples might include:

Perhaps your hiring time is down, but how is the fit of the people you’re bringing in?

Maybe more people are visiting your website, but they aren’t the right people for your service.

We can calculate a country’s GDP, but GDP doesn’t account for human labour without a monetary transaction—like a home-cooked meal—and vital work done by nature, like filtering water, sequestering carbon, or lifting spirits.

If food in a can gives you all the nutrients you need, what are you missing by skipping family meals?

A commonly cited example of the McNamara Fallacy is the US military’s approach to measuring progress in the Vietnam War.

The McNamara Fallacy and the Vietnam War

As the US Secretary of Defense from 1961–1968, McNamara assessed the progress of the war in Vietnam using techniques similar to those he had used successfully in business.

If wars are won by inflicting damage on the enemy, then metrics measuring the damage inflicted should be decent proxies for progress. In particular, body count became the primary measure of progress.

Reliance on purely quantitative metrics had significant shortcomings. In this context, enemy body count was more easily quantified than the enemy’s morale, political support, or resolve to defend. And because so much attention was paid to this measure, many officers believed the counts were often inflated (see Goodhart’s Law and Campbell’s Law below).

The tons of explosives dropped are easier to measure than the reduction in capabilities they caused. The number of troops you have is easier to measure than the abilities of those troops.

According to the numbers, the US was winning the war, yet it failed to overcome the resistance of North Vietnam.

Related Ideas to The McNamara Fallacy

An over-reliance on quantitative metrics quickly leads to several related problems:

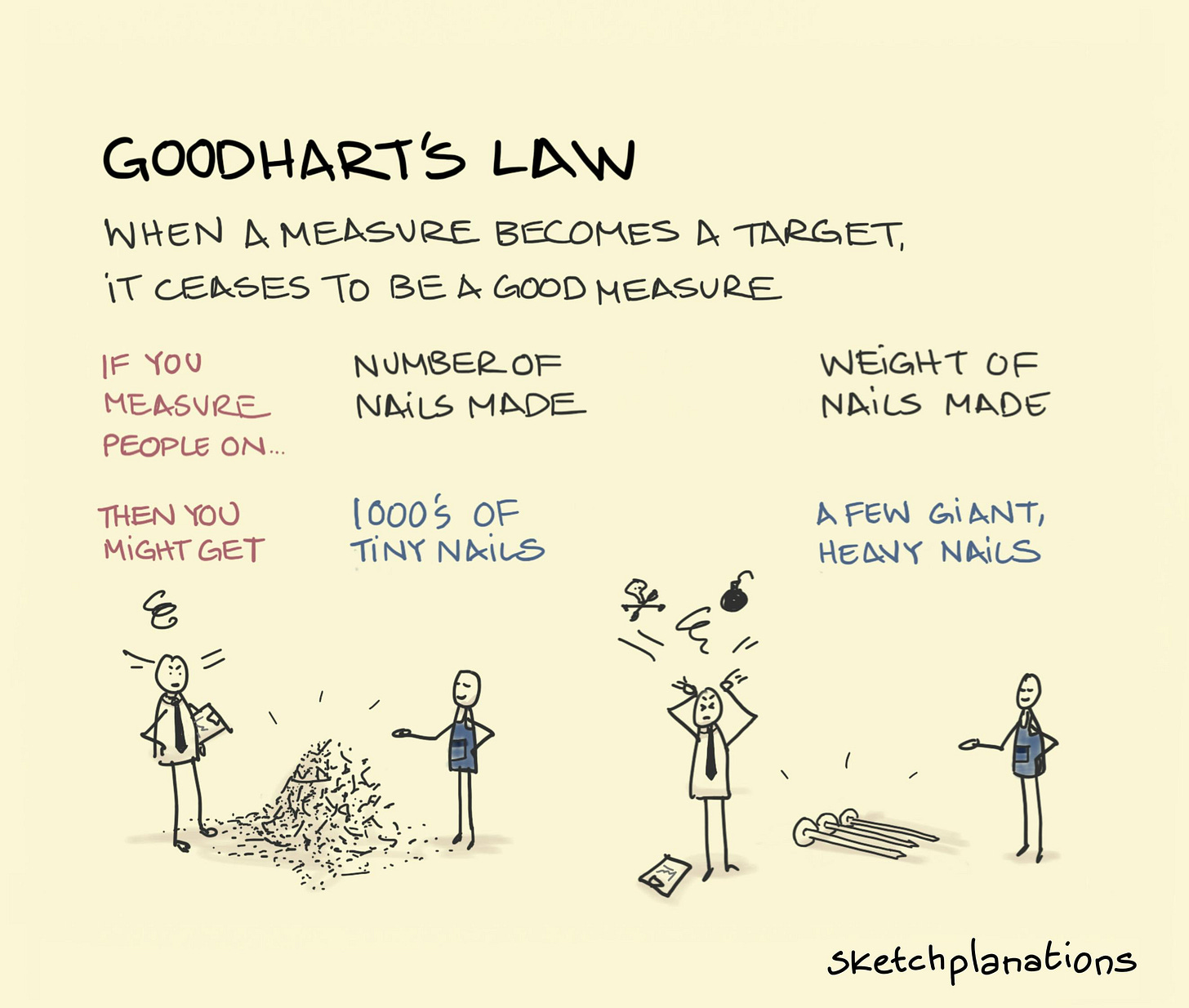

Goodhart’s Law: When a measure becomes a target, it ceases to be a good measure.

For example, assessing the progress of a war by the number of enemy dead may lead to increased killing to inflate the numbers. Or pinning a bonus on review ratings may lead to fake reviews.

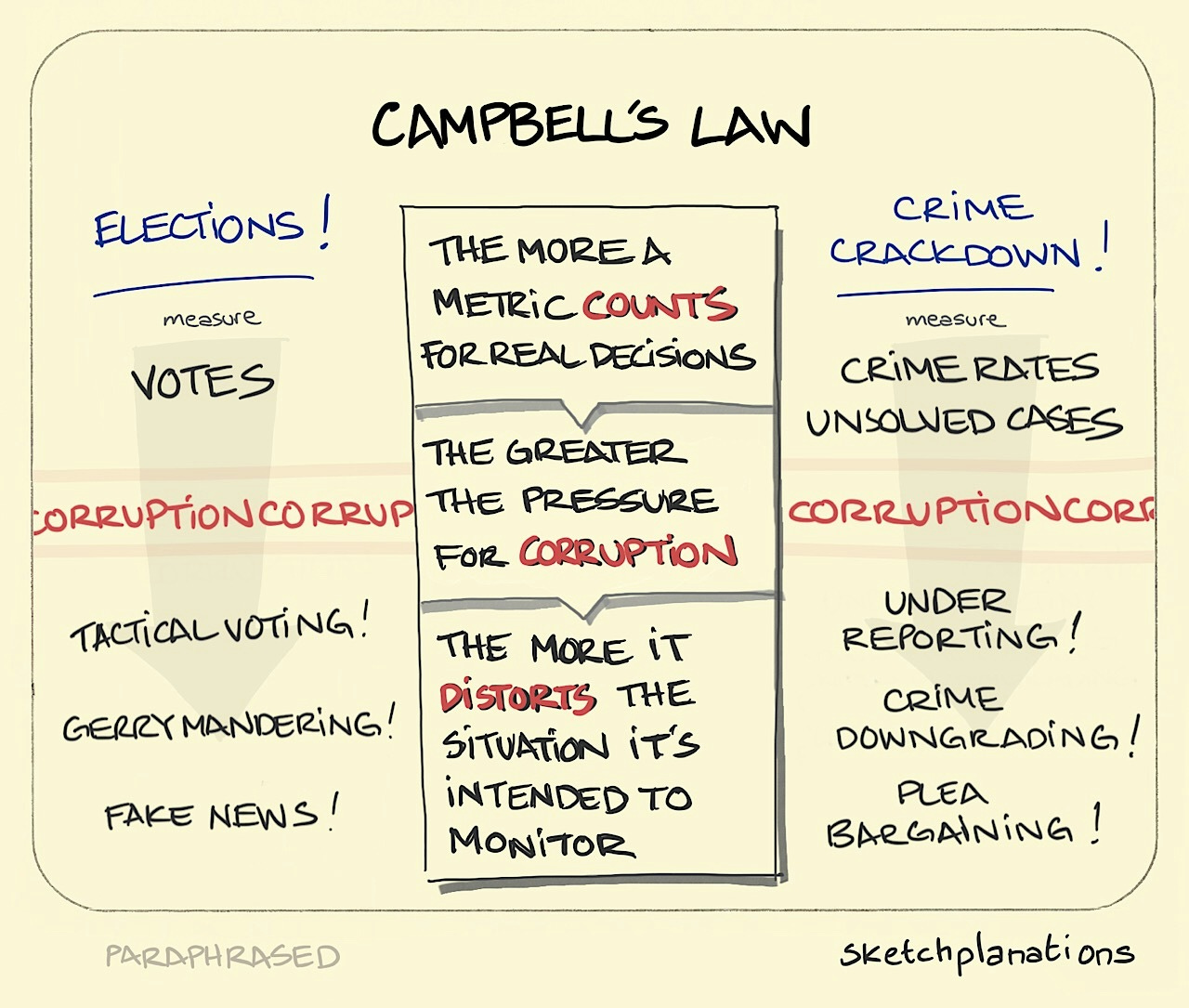

Campbell’s Law: The more a metric counts for real decisions, the greater the pressure for corruption, and the more it distorts the situation it’s intended to measure.

For example, if reducing crime rates matters for law enforcement jobs, it creates an incentive to under-report cases or downgrade crimes.

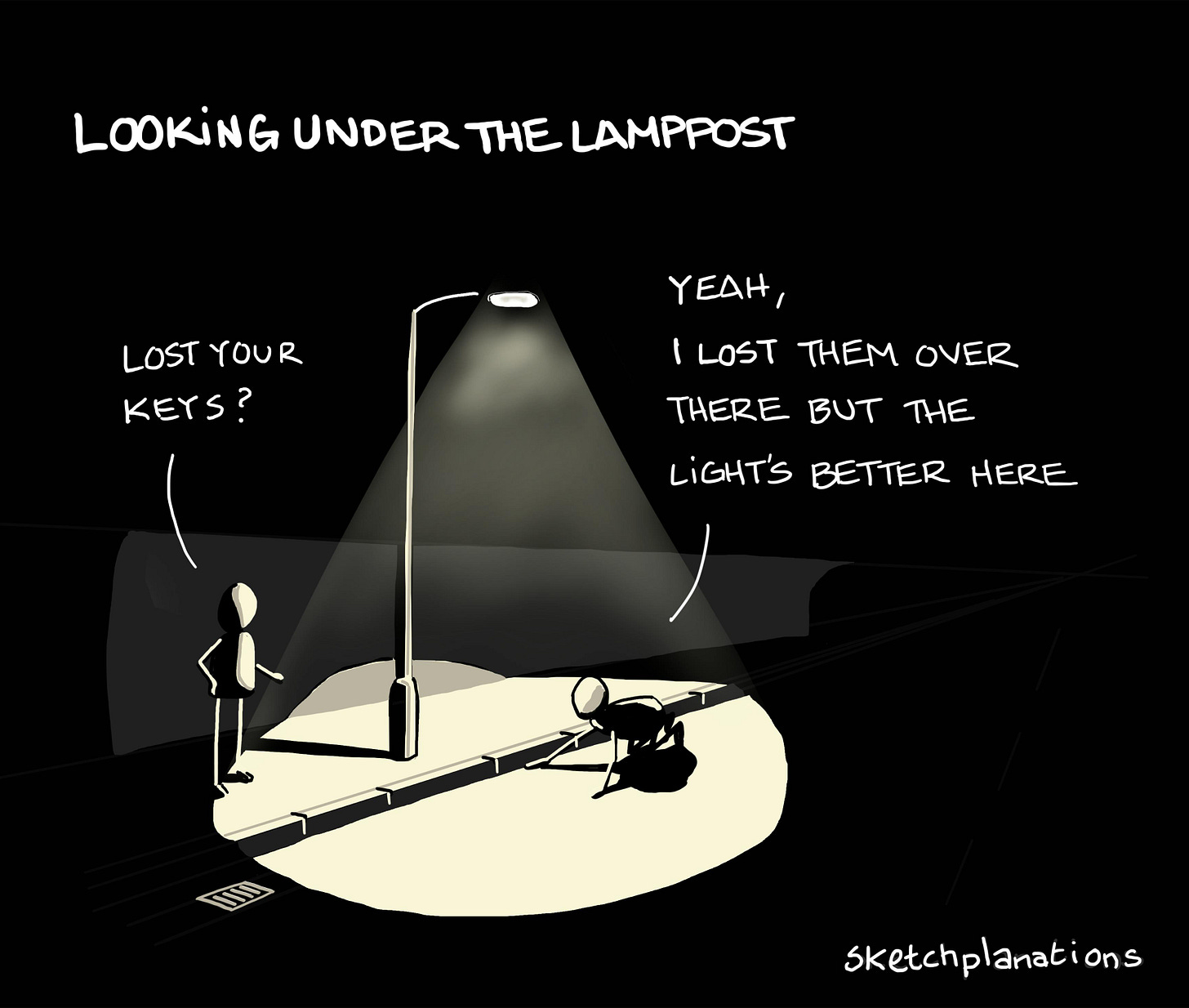

The Streetlight Effect: Looking where it’s easiest to look, rather than where it matters. Also known as the drunkard’s search or looking under the lamppost, the Streetlight Effect is named after the economists’ joke of a person scrabbling on the ground for their car keys under a street light. When asked where they lost them, the person says they dropped them “over there,” but the light’s much better over here.

For example, optimising an existing product because it is known and does well, rather than working on an uncertain new product.

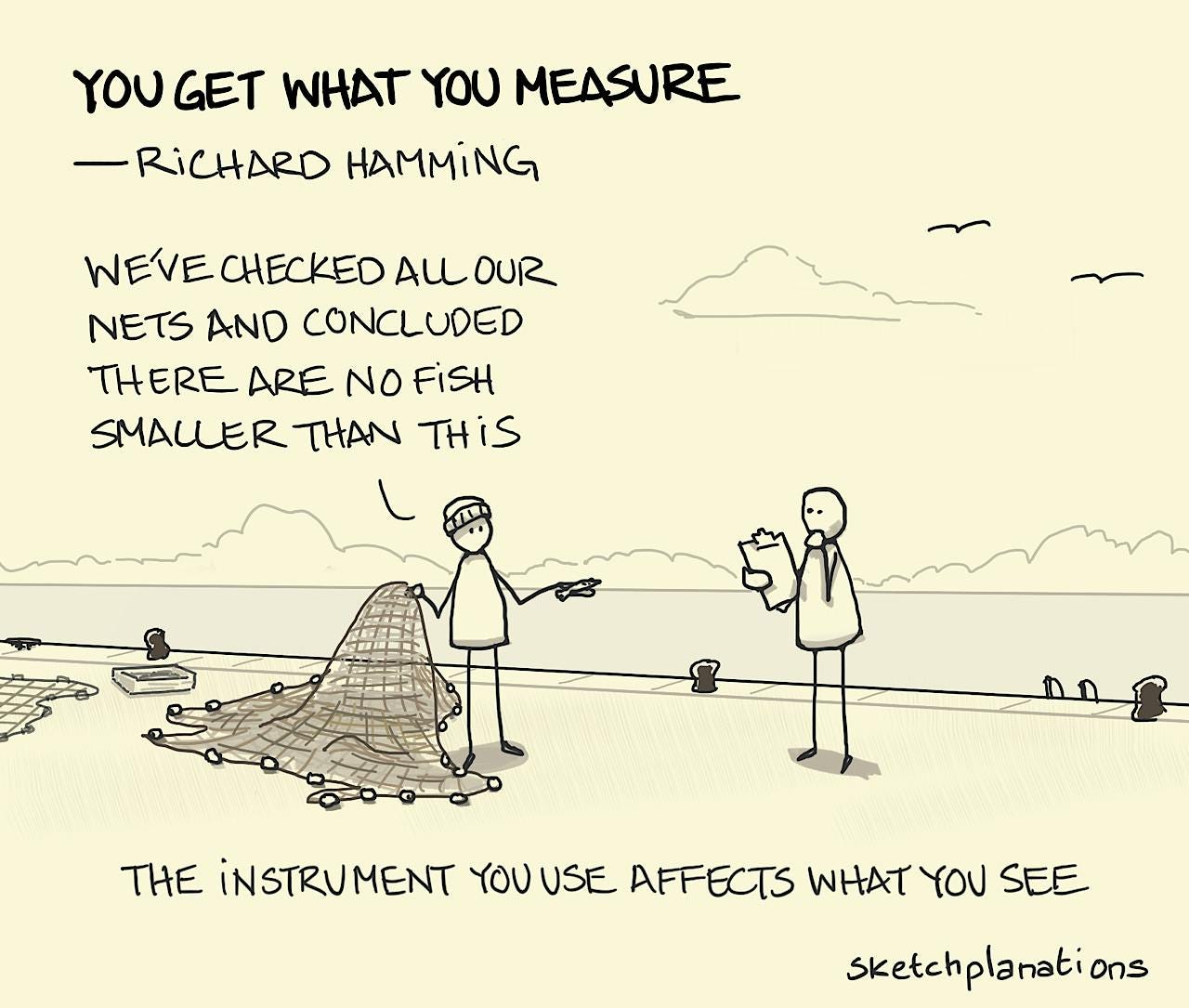

You Get What You Measure: The instrument you use to measure affects what you see.

“For example, in school it is easy to measure training and hard to measure education, and hence you tend to see on final exams an emphasis on the training part and a great neglect of the education part.”—Richard Hamming

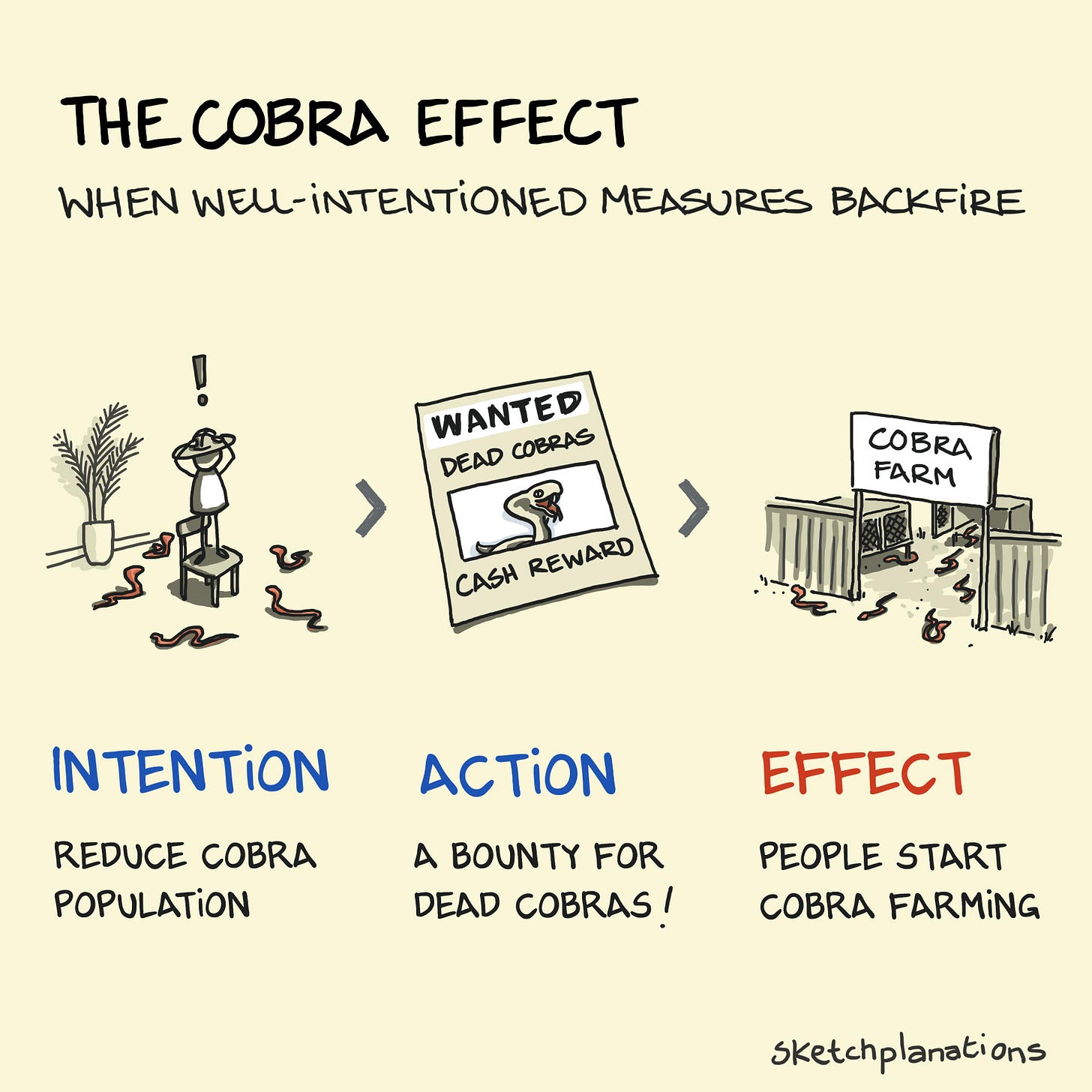

The Cobra Effect: When an implemented policy backfires, causing the opposite of the intended outcome. Named after a British attempt to reduce cobras in Delhi by introducing a bounty on dead cobras. Seeing the lucrative bounty, people started farming cobras, thereby increasing their numbers.

For example, the Streisand Effect is a well-documented case of a cobra effect, in which Barbra Streisand tried to suppress images of the coast that included her house. The attention drawn to her attempts to suppress the images brought thousands of people to look at them who otherwise would never have thought to do so.

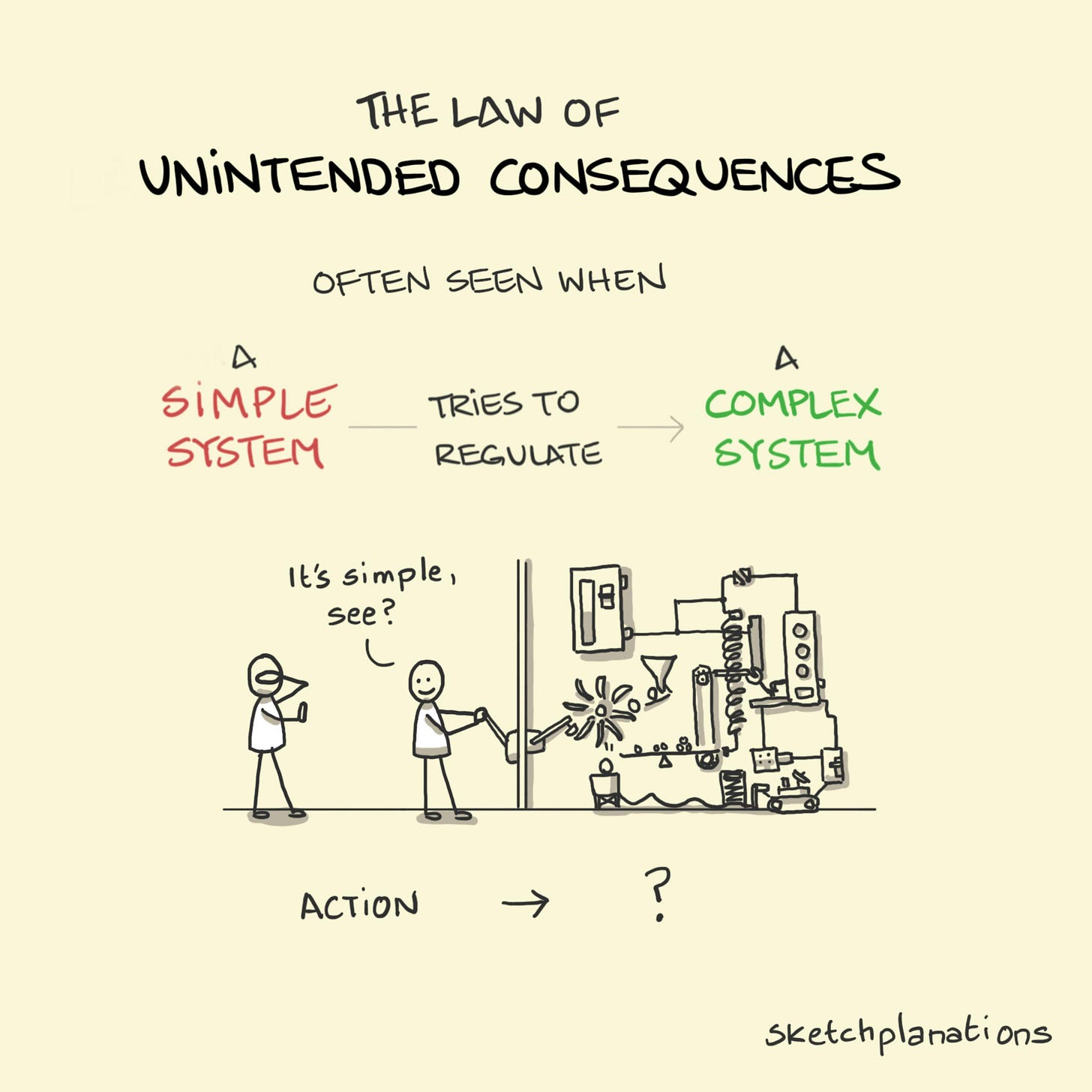

And all of these are examples of the broader Law of Unintended Consequences, which comes from trying to regulate a complex system with a simple system.

If the ideas above aren’t enough, you might also see:

- In theory, practice is the same as theory, but not in practice
- All models are wrong but some are useful (from last week)
- Reliability vs validity: You can hit the target but miss the point.
- The map is not the territory (no sketch!)

For a super discussion on the measurement of all sorts of things, including acute risks (being run over by a bus) and chronic risks (smoking), I recommend the fun podcast episode: Microlives & The Art of Uncertainty with Sir David Spiegelhalter

The article about bike weight is: The McNamara Fallacy and Bikes by Peter Verdone

Daniel Yankelovich, who named the McNamara Fallacy, stressed no disrespect to “one of our most distinguished citizens,” Robert McNamara, “a brilliant and dedicated man who brings a vital intensity to bear on his work.”

Some of the complexities of the war and McNamara are covered in the Academy Award-winning documentary The Fog of War, which makes fascinating and, at times, uncomfortable viewing.
